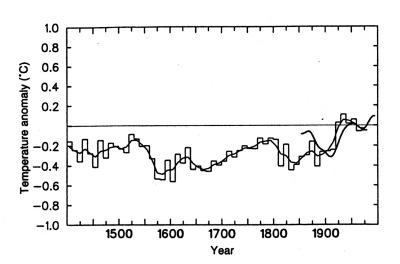

A little while ago, I mentioned here the curious and very large adjustment to 19th century sea surface temperatures based on changing hypotheses about the relative use of wood and canvas buckets. It’s always worth checking whether there’s a hidden agenda for seemingly innocent adjustments. Sometimes my instincts are pretty good. Here’s Figure 3.20 from IPCC SAR [1996] together with the original caption:

Original Caption: Figure 3.20. Decadal summer temperature index for the Northern Hemisphere, from Bradley and Jones [1993], up to 1970-1979. The record is based on the average of 16 proxy summer temperature records from North America, Europe and east Asia. The smooth line was created using an approximately 50-year Gaussian filter. Recent instrumental data for Northern Hemisphere summer temperature anomalies (over land and ocean) are also plotted (thick line). The instrumental record is probably biased high in the mid-19th century, because of exposures differing from current techniques (e.g. Parker, 1994b). [my bold]

Here the instrumental record in the 19th century fits sufficiently poorly with the proxy reconstruction that it requires explanation. One wonders whether this might have contributed even a little to the re-tuning of 19th century SST results. Especially when the bucket adjuster (Folland) is closely affiliated with the proxy proponent (Jones, who is also the primary author of the land temperature data set.) The bucket adjuster is then the lead IPCC chapter author (and is still lead author in IPCC TAR), so it’s all pretty incestuous. The "validation" for the various multiproxy studies is claimed because they supposedly track last half 19th century results a little bit. But doesn’t this show at least enough prior tuning to affect cross-validation statistics? The nuance is important: I’m not arguing the adjustments per se (at least for now), but whether the tuning process needs to be considered in defining statistical significance benchmarks.

26 Comments

When the data seem anomalous, and you are looking for an omitted variable or variables to resolve the anomaly, it is very easy to think of a possible variable that pulls in the direction that explains the difference. But you should be considering all of the relevant variables that could pull in either a positive or negative direction. What about this consideration? Ships driven by sail power might follow different paths relative to those later that could largely ignore where the winds blew. And SST is affected by wind/ocean currents. Thus it may have been the changeover to steam power in the latter half of the 19th century that caused an anomaly in measured SSTs. If this were the real reason, then the pre-steam ocean temps could not be compared to the more recent ones, because they are apples and oranges.

It would be really interesting for someone to parse through the SST data and check for how this is handled. It seems pretty obvious that people should look at steam versus sail; I don’t recall seeing it mentioned explicitly, but I haven’t examined the temperature methods other than in a very cursory way and there could be detailed discussions of this somewhere. Steve

Have you done any work to audit the surface temperature record? Sounds like something you would be suited to. If the records are divided into good and bad series on a priori grounds, is there a difference in temperature trends between the two? Have you looked into the correction for the urban heat island effect?

Steve: I agree that these records need to be examined. But it’s a big job, other people are interested in the issues already and I’ve got a huge amount of unfinished business already in the proxy area. Also a lot of necessary station data is unavailable so it may be hard to get the necessary traction.

John Hekman has made an important observation: if the data seem anomalous …..

In the mining business this usually points to an orebody, but if we followed the procedures he then discusses afterwards, that orebody is made to disappear, on the basis that the computed results do not match the expectations of the model etc.

I venture the heresy that climate scientists are not too familiar with the scientific method, and if they are, it is one of a local applicability, rather than universal.

If anyone with skills in C++-programming is interested in helping auditing the surface temperature record, you can make a contribution. I have developed a program to correct temperature records from weather stations in a region. Correct for non-climate effects like the urban heat island effect, that is. The program seems to be working quite well, but a few details need to be added and it needs to be more bug tested. Are you willing to help?

In economics, the way the scientific method is employed is to specify a complete structural model of demand and supply interaction as a theoretical matter. Then you find a way such as multiple regression to measure the parameters of the model, and you accept the results as confirming or refuting the theory behind the model. What Mann et al. are doing is a reduced form model. It contains variables that affect temperature, but the principal components procedure is really a computerized fishing expedition that looks for correlations, or “mines for hockey sticks”, as Steve so aptly puts it. The Mann procedure most certainly is not a scientific test of a model that is based on firm concepts from the discipline. What is most amazing is that the procedure, according to Steve, is one which looks through a haystack, and if it finds one needle, we then say it is a needle stack, not a haystack.

Is the rationale for the adjustment purely statistical, or are there foundational studies (experiments) that look at how temps differ depending on bucket method?

There are hokey studies of the effect. But they have no information on distributions. And the idea that the entire world changed over its measurement techniques in Dec 1941 when the US entered World War 2 is too ridiculous for belief. There was a World War 2 before the U.S. entered and there were bucket measurements after WW2.

Were the hokey studies experimental (checking different methods directly) or statistical (looking for some correlations or distributions or delta or something)?

I had a dialogue with Vincent Gray about this some months ago.

I spend a lot of time studying the history of technologies, among them ships, and also the history of economic development.

There are a whole lot of potential uncontrolled variables in measuring sea surface temperatures.

1. Ship design.

There are tremendous discontinuities in ship design which affect sea water sampling. It is one thing to heave a bucket over the side of a 200 ton wooden schooner going five knots in the Thames estuary and quite another to do it over the side of a steel full rigger of 3,000 tons going ten to fifteen in a gale in the Southern Ocean, or a steel tramp steamship of 1,500 tons going 8 knots and an ocean liner of 20,000 tons going 20 knots in the North Atlantic during a winter gale. Height from the water would vary from a couple of feet to twenty feet or more, for starters. How does anyone hang on to a bucket thrown from a sailing ship going fifteen knots in a full gale in the Southern Ocean with waves of fifty feet? Answer: you don’t – you either lose the bucket or the thing yanks you over the side. How conscientious were sailors and officers in the face of these practical difficulties?

How often did they scoop up a bucketful from the scuppers rather than risk life and limb throwing a bucket over the side, and risk losing the bucket, which would be docked from their meagre pay (especially on British ships)? Did it only get done in calm weather and not in stormy? Some areas of ocean are calmer than others and some stormier. How did that affect sampling, given the difficulties involved in stormy weather?

Sampling Technique

I imagine the switch from bucket to inlet water was done gradually. Motor ships draw in cooling water continuously. You don’t need cooling water for a steam engine. They do need feed water for the boilers. Do these different requirements affect how close the inlets were to contaminating sources of heat on board ship, i.e. steam versus motor ships? Cooling water for a motor ship is drawn in continuously. I imagine you only draw in feed water for steam boilers intermittently, as the steam is recycled for reuse as feed water. Someone would have to know how many motor ships versus steam ships there were in any given year to begin to get a handle on this, and a whole lot about naval architecture and the practice of running steam versus diesel engines.

The problem with cooling water inlets is that as ships grew in size, as they did, the inlet would presumably be deeper and deeper in the hull. The samples would thus be coming from deeper and deeper in the water column. Someone would have to look at design drawings to confirm where the inlets were typically placed and then gather data on the average size of motor vessels to figure out where, on average, in the water column, samples were coming. You would also have to have an idea of how temperature changes with depth, something which would vary with time of year and place, and what the state of the weather is (how much mixing is going on due to wind and wave action) I should think.

The study which looked at the changeover from buckets to engine inlets found that there was an upward bias with the inlets. In view of the argument in the previous paragraph, I would have expected a downward bias.

Quite apart from that, sea lanes have changed:

1) Because sailing ships followed routes determined by wind systems (Trades, Westerlies etc) whereas steam and motor ships took and take more direct routes. Clearly the areas of ocean being sampled are different between the sail and steam eras.

2) Because as the world economy developed trade patterns changed, so new routes were pioneered, some old ones were used more and some less etc. Again the areas of ocean sampled altered with each new trading pattern.

The number of observations in each year would have changed according to the number of ships doing the sampling. This would have been affected by several things: cyclical changes in trade governed by economic cycles; the overall increase in trade as the world economy grew; wars and convoying versus peace and freedom to choose the route; the increase in the average size of ships (especially in the second half of the last century).

What noise or bias could these factors have introduced into SST data? For example, it may be that we have fewer measurements coming from a smaller fleet of ultra large ships in the last 30 years or so, versus many more measurements from a larger fleet of small ships a century ago following radically different average routes, with the latter sampling much more in storm conditions than the former, and the former sampling much deeper in the water column.

It didn’t seem, from my dialogue with Vincent, who knew something of the attempts to clean up SST data, that anyone was even aware of these various potential sources of bias, never mind attempted to control for them.

PS I hope this comment doesn’t get removed like the others.

Mike,

The comment spam software has a very low threshold of the number of comments that a new visitor can post per day.

It seems to adapt on a day by day basis.

Reactions to 10

A. Who is Gray and what was the substance of the discussion?

B. Steam ships do need continuous cooling water. They need a lot MORE than a motor engine craft. This is because the Rankine (normal steam engine) cycle is closed (steam is condensed), whereas the Diesel (or Otto) internal combustion cycles release vapors at the end of a piston stroke.

C. Yes, feedwater is needed for the steam engine for makeup and yes the need is intermittent. (a still will be run, which requires input and cooling, but it is so sized that it is not running continuously).

D. The “storminess” thing might be a part of the offset in intake/bucket methods (of course it could vary by geography). Similarly, differences in tendency to gundeck (cheat on logs) could give a temp bias and might be part of the offset correction and would vary by latitude (colder, more likely to gundeck, I guess).

E. Despite all the possible confounding factors (possibly sizable in context of small changes inherent in GW), I’d emphasize that this is not a wacky or “stretched” proxy. Sailors have lots of good reasons to measure temperature (engine efficiency monitoring, oceanographic research, navigation, antisubmarine warfare, etc.). It’s a lot better than tree rings, guys. Let’s be thoughtful and analytical about possible confounding errors. But also be aware that there may be signal to be extracted (worth trying).

F. Regarding differences in routes of steam and wind ships, this seems like something that is easily accounted for by using grid squares. There may be differences in number of points in a grid, but no reason to be worried about the bulk tendency to be in different places itself (as if you just averaged the measurements without geography).

G. Deepening drafts over time might have an impact on the measurement (or other factors like speed of the water in the pipe, quality of insulation, etc.). This is worth looking at and thinking about and publishing papers on, but I stick with the point in (E). Don’t assume it is impossible to find useful signal because of control worries.
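To make point F concrete, here is a minimal sketch in Python of why grid squares take care of the route change. Everything in it is an assumption for illustration: the two cells, their temperatures, the 5-degree cell size, and the function names `naive_mean` and `gridded_mean` are hypothetical, and the cell means are combined without any area weighting.

```python
from collections import defaultdict

def naive_mean(obs):
    """Average every observation equally, ignoring where it was taken."""
    return sum(temp for _, _, temp in obs) / len(obs)

def gridded_mean(obs, cell_deg=5.0):
    """Average within each lat/lon cell first, then average the cell means.

    Simplification: cells are weighted equally (no cos(latitude) area weights).
    """
    cells = defaultdict(list)
    for lat, lon, temp in obs:
        key = (int(lat // cell_deg), int(lon // cell_deg))
        cells[key].append(temp)
    cell_means = [sum(v) / len(v) for v in cells.values()]
    return sum(cell_means) / len(cell_means)

# Hypothetical ocean that never changes: one warm cell (20 C), one cold cell (10 C).
WARM = (12.0, -30.0, 20.0)
COLD = (-52.0, -60.0, 10.0)

# "Sail era": nine reports from the warm cell, one from the cold cell.
sail = [WARM] * 9 + [COLD] * 1
# "Steam era": routes shift, so the proportions are reversed.
steam = [WARM] * 1 + [COLD] * 9

print("naive:  ", naive_mean(sail), naive_mean(steam))      # differ only because routes differ
print("gridded:", gridded_mean(sail), gridded_mean(steam))  # same value in both eras
```

The gridded figure is unchanged by the route shift, which is the point of F. What the sketch does not address is the objection that a cell with one report is a much weaker estimate than a cell with nine.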

Vincent Gray is an expert reviewer for the IPCC. He is a sceptic.

http://www.warwickhughes.com/gray04/

His comments on the anomalies in the Siberian land temperature series are particularly interesting. There is an upward discontinuity coincident with the collapse of the Soviet Union which is not found at adjacent sites e.g. Spitsbergen. Removing this anomaly leads to average global temperatures declining in the latter part of last century.

B makes sense.

Here’s a question: as marine steam engines went from simple, to duplex to triple compound to quadruple compound to turbine, what happened to the cooling requirement and the feedwater requirement and how did that affect the intake?

C question then becomes at what point do you take the measurement – at mid stream one would hope – as with a urine sample?

D makes sense to me

E I agree it is not wacky and there may be a signal to extract. The question, as always, is how do we separate the signal from the noise.

How motivated sailors are to sample properly if at all will depend on a lot of things. Naval ships with military discipline are one thing; clapped out British steel sailing ships in the early 1900s, run on a shoe string by groups of small investors (old ladies in Llangollen for example – and that is not a joke, but a fact ) and crewed by worn out over age captains with underfed and overworked crews kept under control by bucko mates would be less than ideal samplers. The question is: the relative importance of different quality samplers in the samples overall and for specific grids in particular.

Measurements from 1890 to 1930 in the South West Atlantic, the Southern Ocean and the South East Pacific would have been taken by a combination of highly efficient German, French and Finnish sailing ships and mostly clapped out, poorly run British sailing ships, of which the latter were the great majority until 1918.

Re F: I should have thought the numbers of observations per grid square could matter. Once the sailing ships went there would have been few observations in squares in the Southern Ocean, for example, or in the South West Atlantic. How many observations are sufficient for a valid measurement for the square, especially given the difficulties of sampling in these squares to begin with (due to bad weather, crews with better things to think about, poor crews)? In squares which could have sampling problems, wouldn’t you wish the sample to be as large as possible?

Re: G I agree about the need for study. At no point did I say sea surface temperature measurements should be ignored. My message is that people have been taking them at face value, the same way they took land surface measurements at face value before urban heat islands, changes to sites etc were recognised as possible sources of bias. My comments are intended as a heads up to examine SST data more closely for possible biases and adjust for them.

This is really important because the geographic coverage of SST measurements is so limited and values for the uncovered squares are estimated by extrapolating from adjacent squares for which there are measurements.

13B: I expect that over time, the engines got slightly more efficient. But this is a heat cycle. Carnot (or Rankine) rules. So don’t expect big changes. A bigger effect than efficiency would be changes in the size of the engine, needing more coolant. But then you just build a wider pipe. If you want, you can also get into the seawater pump design (how it evolves and affects velocity.) However in any case, it’s all unlikely to matter much as the water is moving pretty damn fast. I think other factors like length of run of the piping and especially the draft differences are more likely to affect the answer. (the real answer is I don’t know. But that’s my thoughts).

13 C. By intermittent, I mean the evap or still is running 8 hours out of 24. Logs are likely hourly. (the whole issue is irrelevant since you have seawater to the condensers always.)

13G and overall: I think it is readily acknowledged that marine temps are likely to have more error for all the reasons above. Not a brainstorm. Of course there are issues with the station data as well, but I would expect them to be less than marine data (other than that taken explicitly for oceanographic/meteorological reasons). Would expect a 1 degree error bar to move to a 2 degree one (or something like that). I can’t recall, but that may even be explicitly done in the combinations (higher error bar on the sea than on the land).

One thing I don’t understand. You are talking about feedwater for steam engines and the assumption seems to be that seawater would be used. But surely seawater is too corrosive to use as feedwater, isn’t it? Shouldn’t the ships be saving rainwater to use as feedwater for the boilers? Or is there some way to use seawater without a buildup of salt and precipitants?

See 12.C. and then look up “still” in the dictionary. 😉

RE: 15

The early marine steam engines used sea water. They could do this because the boiler pressure was low enough that steam created did not contain salt which could contaminate the engine. The boilers were made of wrought iron which is much more resistant to corrosion than mild steel. The latter was not used until the last quarter of the 19th century when the Bessemer and Open Hearth processes made it economical enough to use.

The boilers of marine engines were blown off to reduce salinity when it reached a critical level. On the Great Britain’s boilers this was done when the boiler water reached three times the salinity of sea water.

I said that steam engines did not require cooling water. This is strictly true as you do not cool the cylinders as you do with an internal combustion engine. It would condense the steam and lower the pressure on the piston, the opposite of what you want. This was actually done with the very earliest “steam engines” such as those of Newcomen and Savery. In them you injected steam into the cylinder and then sprayed it with water to create a vacuum. The piston then descended under the force of gravity. They were really “atmospheric engines”. True steam engines, ones in which the piston is driven by steam pressure, were invented by Watt when he introduced the separate condenser.

However, I forgot about the need for cooling water to condense the steam after use, a fact which TCO quite rightly reminded me of. I think water was used to cool the bearings as well.

These engines were used in ships right until WWII. The Liberty ships which were made in enormous numbers during the war were powered by reciprocating steam engines of this type.

They were Watt style walking beam engines, which operated at very slow speed e.g. the engines of the Great Britain ran at 16 revolutions per minute. If you visit San Francisco, go and see the Jeremiah O’Brien, a Liberty ship from WWII. It has triple expansion machinery which they occasionally steam. I used to watch similar engines in the cotton mills when I was a boy. The massive walking beams were something to see.

I suspect that distillation equipment did not come in until the beginning of the 20th century when the introduction of steam turbines required higher steam pressures. However, steam pressures were raised quite a bit for reciprocating machinery from 1870 on, so it is possible it was introduced earlier. I will have to check.

An important thing to examine is the routing of the feed pipe to the boiler. How close did it come to heat sources (e.g. boilers and fire boxes , boiler flues and the feed water pre-heater) and where was the thermometer situated (e.g. upstream or downstream of the heat sources or adjacent to them)? This would have probably varied from design to design. At this stage we cannot discount the possibility that the variance introduced by design differences approaches the anomalies in the signal we are trying to detect.

When did the switch from bucket to inlet sensor actually happen? Ocean liners were already 20,000 tons by the late 19th century. Given the height of the deck or even a loading port above the water, and the speed of these ships (already approaching 20 knots) bucket sampling would have presented considerable practical difficulties. It may be that inlet sensors came into use much earlier than is apparently being assumed (WWII).

Given that the feedwater was pre-heated, presumably to a desired temperature, it seems reasonable to assume that fixed thermometers could have been used to measure the temperature of feed water from quite early on. Also, given the need to pre-heat feed water, it is unlikely that feed water pipes were insulated and there would have been an incentive to deliberately route them close to waste heat sources in order to reduce the cost and fuel consumption of pre-heating. Whether or not these are real considerations versus mere conjectures would require research to determine.

For a normal steam engine, the feed water is not where you measure sea water temps. you measure it at the inlet to the main condenser (on the seawater side, not the feedwater side).

I don’t know how long open cycle steam engines were operated at sea. I think we’ve been closed cycle for a long time, though. In any case, sure the feedwater is often routed through the exhaust gas stack to do some preheating, but that’s no reason not to measure feedwater temps at the inlet to the system as well as at the boiler inlet. But a normal Rankine cycle condenses feedwater with seawater doing the cooling. Seawater temps are measured on the inlet side of seawater/(other fluid) heat exchangers…

TCO

RE: 18.

It sounds as if you have served shipboard. On what kinds of vessels and when?

Ocean going steam ships have been around since the 1830s. That is a very long time ago, during which all sorts of variations on pipe routing and where you measure temperatures could have been tried. I strongly suspect that for the first 70 years of steamship history, there were no condensers. Ergo, where was the inlet water temperature taken then? It couldn’t have been at the condenser inlet if they didn’t have them. On small tramp steamers, where high pressure would not have been an issue, since their service speeds were so low, it may have lasted even longer. And there were lots of those around.

I shall have to do some sleuthing in the history of naval architecture, I guess. Time for some data 🙂

Cheers,

Mike

I have been looking at the handling of SST data.

The gist of what I have learned is:

There is an awareness of the bucket vs engine intake and depth of intake issues, but only the former has been investigated and the data adjusted.

I find this remarkable, as bucket vs engine has to be a minor bias relative to depth in the water column at which the measurement is taken. Perhaps researchers picked on it for the way in which it was expected to help adjust the data in a direction they liked.

Variability in the depth at which the engine intake is beneath the water surface is said to be one to five metres. I wonder about that. As a teenager I sailed a dinghy on Milford Haven in South Wales. The Haven is one of Britain’s two ports for super tankers. I would say that the difference between the waterline at full load and empty on a supertanker is quite a bit more than 5 metres. However, that was 40 years ago, so I am prepared to be corrected.

Research has also been done on bias in bucket measurements due to time of year and whether the measurement was taken with the thermometer in or out of the water.

Two international colloquia have been held on SST data. I quote selected abstracts below.

Mike

Southampton Oceanography Centre Study

http://www.cdc.noaa.gov/coads/advances/kent.pdf

Ships that use buckets to measure the SST are more likely to report better quality air temperature measurements – better exposure of the temperature sensors?

Buckets give much more reliable SSTs than engine intakes,

but may be biased when the air-sea temperature difference or surface fluxes are large.

2nd International Workshop on Advances in the Use of Historical Marine Climate Data (MARCDAT-II)

17-20th October 2005

Hadley Centre for Climate Prediction and Research, Met Office, Exeter, U.K.

ABSTRACTS

Version 1, 11th July 2005

http://www.cdc.noaa.gov/coads/marcdat2/marcdat2abstracts.pdf

MARCDAT-II abstracts Version 1

Theme 1: oral

Biases in Modern in situ SST Measurements

John Kennedy

Hadley Centre for Climate Prediction and Research, Met Office, U.K.

There exist systematic differences between sea surface temperatures measured by drifting buoys and moored buoys and between these and the various methods employed by the Voluntary Observing Ships. Changes in the proportions of observations taken by each of these methods can lead to time-varying biases in large scale averages of SST and therefore to misestimates of recent global trends. Using metadata from ICOADS and WMO publication 47 the relative biases due to bucket measurements and engine room intake measurements from ships are evaluated and compared with the measurements from drifting buoys. Between 1970 and 2004 the proportion of observations in ICOADS that comes from drifting buoys has risen from 0% to over 60%. Because drifting buoys on average report cooler SSTs than ships this increase in relative numbers implies that we may be underestimating the recent warming of the ocean’s surface.
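The mechanism in the abstract above is simple arithmetic, and a rough Python sketch may help. The 0% and 60% buoy shares come from the abstract; the buoy-minus-ship offset of -0.1 deg C is purely a hypothetical placeholder (the abstract quotes no figure), as is the function name `mix_shift_bias`.

```python
def mix_shift_bias(buoy_fraction, buoy_minus_ship_offset):
    """Offset of a pooled ship+buoy mean relative to a ship-only mean, in deg C."""
    return buoy_fraction * buoy_minus_ship_offset

# Hypothetical value: the abstract says buoys read cooler but gives no number.
ASSUMED_BUOY_MINUS_SHIP = -0.1  # deg C

bias_1970 = mix_shift_bias(0.0, ASSUMED_BUOY_MINUS_SHIP)  # 0% buoys (from the abstract)
bias_2004 = mix_shift_bias(0.6, ASSUMED_BUOY_MINUS_SHIP)  # 60% buoys (from the abstract)
spurious_change = bias_2004 - bias_1970

print(f"artificial change in pooled mean, 1970-2004: {spurious_change:+.2f} deg C")
```

With the assumed offset, the pooled average drifts cold by 0.6 times 0.1, or 0.06 deg C, without the ocean changing at all. The real size depends entirely on the true offset, which this sketch does not know.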

Theme 1: poster

The use of GIS in reconstructing old ship routes

Frits B. Koek, KNMI, The Netherlands

In 2003 the EU project CLIWOC was successfully completed with the delivery of an impressive final report and an even more impressive data set. CLIWOC contains data from old ship logbooks (1750-1854) that needed a range of corrections and adjustments before they could be used for further research. One of the problems was the so-called “shifting prime meridian”. The current prime meridian (Greenwich) was only accepted internationally in 1884, during an international conference in Washington. Before that time, a wide range of alternatives were used. In CLIWOC not less than 646 different prime meridians were identified. Another problem was the inaccuracy of the determination of the ships’ positions. Although sailing around the globe, the precision of the ships’ navigation was not high. Many ships travelled along the coast, to keep in touch with the land, but increasingly more ships went on the high seas without any point of reference along their route at all. Small daily inaccuracies in estimating their positions increased the error towards the end of their voyage. Not knowing exactly where they were, captains continued on their method of dead reckoning, even if they might end over land on their navigational charts. During the CLIWOC project, a methodology was developed to deal with these problems, making use of GIS software (i.e. ArcMap). This methodology will be presented at the workshop.
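How fast "small daily inaccuracies" pile up depends on whether they are random or systematic. The sketch below is not from the abstract: it assumes two textbook cases, independent daily errors (which grow with the square root of the number of days) and a constant daily bias (which grows linearly). The 5 nautical miles per day and the 100-day voyage are hypothetical numbers.

```python
import math

def dr_error_random(sigma_daily_nm, days):
    """Std. dev. of position error after `days` of independent daily errors."""
    return sigma_daily_nm * math.sqrt(days)

def dr_error_systematic(bias_daily_nm, days):
    """Position error after `days` of the same uncorrected daily bias."""
    return bias_daily_nm * days

# Hypothetical voyage: 100 days without a land fix, 5 nm of error per day.
print(dr_error_random(5.0, 100))      # random errors partly cancel
print(dr_error_systematic(5.0, 100))  # a steady bias (e.g. an unknown current) does not
```

Under these assumptions the random case ends at about 50 nm and the systematic case at 500 nm, which is one way a dead-reckoned track can finish "over land" on the chart.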

Theme 2: poster

Improved analyses of changes and uncertainties in sea surface temperature measured in situ since the mid-nineteenth century

N.A. Rayner, P. Brohan, D.E. Parker, C.K. Folland, J. Kennedy, M. Vanicek, T. Ansell and S.F.B. Tett

Met Office Hadley Centre for Climate Prediction and Research, U.K.

A new flexible gridded data set of sea surface temperature (SST) since 1850 is presented and its uncertainties quantified. This analysis (HadSST2) is based on data contained within the recently created ICOADS data base and so is superior in geographical coverage to previous data sets and is shown to have smaller uncertainties. The issues involved in analysing a data base of observations both measured from very different platforms and drawn from many different countries with different measurement practices are introduced. Improved bias corrections are applied to the data to account for changes in measurement conditions through time. A detailed analysis of uncertainties in these corrections is included by exploring assumptions made in their construction and producing multiple versions using a Monte Carlo method. An assessment of total uncertainty in each gridded average is obtained by combining these bias correction related uncertainties with those arising from measurement errors and under-sampling of intra-grid box variability. These are calculated by partitioning the variance in grid box averages between real and spurious variability. From month to month in individual grid boxes, sampling uncertainties tend to be most important (except in certain regions), but on large scale averages bias correction uncertainties are more dominant owing to their correlation between grid boxes. Globally, SST warmed by 0.52±0.16ºC (95% confidence interval) between 1850 and 2004, with a warming of 0.59±0.17ºC and 0.46±0.24ºC in the Northern and Southern Hemispheres respectively. Uncertainties in these trends are dominated by variability about the trend rather than data or bias-correction errors.
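The abstract's claim that sampling error dominates in single grid boxes while bias-correction error dominates in large-scale averages can be illustrated with a toy calculation. This is not the HadSST2 method. It assumes an equal-weight mean over N boxes, sampling errors that are independent between boxes, and a bias-correction error that is identical (fully correlated) in every box. The two sigma values and the function name `large_scale_sigma` are hypothetical.

```python
import math

def large_scale_sigma(n_boxes, sigma_sampling, sigma_bias):
    """Std. error of an equal-weight mean over n_boxes grid boxes.

    Independent sampling errors average down as 1/n_boxes in variance;
    a fully correlated bias-correction error does not average down at all.
    """
    return math.sqrt(sigma_sampling ** 2 / n_boxes + sigma_bias ** 2)

SIGMA_SAMPLING = 1.0  # hypothetical per-box sampling error, deg C
SIGMA_BIAS = 0.1      # hypothetical shared bias-correction error, deg C

for n in (1, 100, 1000):
    total = large_scale_sigma(n, SIGMA_SAMPLING, SIGMA_BIAS)
    bias_share = SIGMA_BIAS ** 2 / total ** 2
    print(f"{n:5d} boxes: sigma = {total:.3f} deg C, bias share of variance = {bias_share:.0%}")
```

With one box nearly all the variance is sampling error. With a thousand boxes the shared bias term is most of what is left, so any argument about the size of the bias corrections bears directly on the hemispheric and global figures.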

Theme 3: oral

Climatic data from the “pre-instrumental” period: potential and possibilities

Dennis Wheeler (University of Sunderland SR1 3PZ, UK)

e-mail: denniswheeler@beeb.net

For many climatologists the instrumental period begins sometime in the mid-nineteenth

century. This is not, however, to conclude that instrumental (or other) data

do not exist for earlier periods. In this respect naval logbooks, of which there are more

the 100,000 in the UK from before 1850, are of particular significance. The EU-funded

CLIWOC project, which covered the period 1750 to 1850, has shown that

non-instrumental logbook data can be put to good use and, indeed, there is the

possibility of procuring pressure field reconstructions from such data. Whilst it is true

to state that most logbook climate information from before 1850 is of this non-instrumental

type (wind force, wind direction and weather descriptions) it does not

follow that instrumental data are absent, and one source is of particular importance in

this respect: the logbooks of ships of the English East India Company (EEIC). In

contrast to Royal Navy ships, most of whom did not commit barometric or

temperature readings to paper until the 1830s, from as early as the 1790s officers of

the EEIC used pre-printed logbook sheets that included specific spaces for air

temperature and pressure. Given also that as many as six such vessels a year would

negotiate the oceans between England, India and China, with observations being

made at noon every day, there is represented here a notable volume of climatic data

with a significant geographic range and time span (the Company ceased activity in

1833). Most of the logbooks have survived and are held in the British Library (St.

Pancras). Although the sailings were highly seasonal and timed to take advantage of

the annual rhythm of air flow in the Indian Ocean, these data have much potential but

have yet to be digitised for any more than a small number of the 4000 logbooks that are

held in the BL. This presentation describes the character, origin and nature of these data

and is made with a view to opening discussions with colleagues to determine the

potential for integrating them into existing, more recent, data sets.

Estimating Sea Surface Temperature

From Infrared Satellite and In Situ Temperature Data

Emery et al

http://www.cdc.noaa.gov/coads/advances/emery.pdf

History of SST Measurement

The earliest measurements of SST were from sailing vessels where the common practice

was to collect a bucket of water while the ship was underway and then measure the temperature

of this bucket of water with a mercury in glass thermometer. This then was a sample from the

few upper tens of centimeters of the water. Modern powered ships made this bucket collection impractical and it became common practice to measure SST as the temperature of the seawater entering to cool the ship’s engines. The depth of the inlet pipe varies with ship from about one meter to five meters. Called “injection temperature” (a thermistor is “injected” into the pipe carrying cooling water) this measurement is an analog reading of a round gauge recorded by hand and radioed in as part of the regular weather observations from merchant ships. Located in the warm engine room this SST measurement has been shown to have a warm bias (Saur, 1963) and is generally much noisier than buoy measurements of bulk SST (Emery et al., 2000). Some research vessels still use “bucket samples” for bulk SST measurements where the buckets are slim profile pieces of pipe with a thermometer built into it.

International Workshop on Advances in the Use of Historical

Marine Climate Data: Boulder, Colorado, USA, January 2002

Marine Data Sets from the UK

David Parker and Simon Tett, Met Office, UK

http://www.cdc.noaa.gov/coads/advances/abstracts.pdf

Abstract

We provide an overview of historical data sets of marine

observations provided by the United Kingdom. The largest UK

input to the new international marine data base was the Met

Office’s Marine Data Bank, which has evolved from punch-card

data since the 1960s and now includes both logbook and

quality-controlled telecommunicated observations from ships.

Observations from buoys are not included. A further

contribution from the UK was over 450,000 newly-digitized

merchant ship observations for 1935-1939. These served to

slightly narrow the dip in global coverage of marine data

around the second world war, especially in the North Atlantic,

South Pacific and southern Indian Ocean. The associated

metadata also confirmed previous estimates of ships’ decks’

elevation used to adjust marine air temperatures. However, an

estimated 25 million marine observations made since 1850

remain undigitized in national archives. The CLIWOC project,

in which pre-1850 data are being processed, may act as a pilot

project for the digitization of this major resource of marine

climate information.

International Workshop on Advances in the Use of Historical Marine Climate Data:

Boulder, Colorado, USA, January 2002

Climatological Database for the world’s oceans 1750-1850 (CLIWOC)

David Parker, Met Office, UK

The immediate purpose of the CLIWOC project is to digitise and quality control

data for the period 1750-1850 from ships’ logbooks in British, Dutch, French,

Spanish and Argentinean archives. Thus, CLIWOC will produce and make

freely available for the scientific community a daily oceanic climatological

database for that period. Digitisation began in late 2001. It is intended that this

information be used to extend and enhance existing oceanic-climatic databases.

The ultimate aims of CLIWOC are to realise the potential of the data to provide

a better knowledge and understanding of oceanic climate variability. Through

the preparation of summaries and derivative diagnostics, it will be possible to

determine some characteristics of oceanic climate change and variability at a

range of time scales. An attempt will be made to prepare a reliable North

Atlantic Oscillation (NAO) index for the period 1750-1850: this will enable

assessment of the influence of the NAO on European climate in that period.

CLIWOC also aims to stimulate similar data-development projects and research using additional historical marine data not included in the CLIWOC project.

CLIWOC ends in November 2003.

CLIWOC is funded under the European Commission Framework 5 initiative.

The participants are:

• Universidad Complutense de Madrid, Spain

• University of Sunderland, U.K.

• Royal Netherlands Meteorological Institute

• Incihusa, Mendoza, Argentina

• University of East Anglia, U.K.

The Observers/Reviewers for CLIWOC are Henry Diaz (NOAA, USA) and

David Parker (Met Office, U.K.).

Bias Adjustments, Quality Control, and Analyses of Historic SST

Thomas M. Smith and Richard W. Reynolds

National Climate Data Center

151 Patton Ave., Asheville, NC 28801

Abstract

Because of changes in SST sampling methods in the 1940s and earlier, there are

biases in the earlier period SSTs relative to the most recent fifty years. Published results

from the UK Met Office have shown the need for historic bias corrections and developed

several correction techniques. An independent bias-correction method is described, based on

night marine air temperatures and SST observations from COADS. Because this method is

independent from methods proposed by the UK Met Office, the differences indicate

uncertainties while similarities indicate where users may have more confidence in the bias

correction. The new method gives results which are broadly consistent with the UK Met

Office bias-correction estimates. The global and annual average corrections from both are

nearly the same. Largest differences occur in the Northern Hemisphere in winter, when our

estimates are larger than the Met Office estimates.

Improved quality control (QC) methods are also developed for COADS data and

applied to historic SST. The improved QC compares observations with a statistically optimal

analysis, discarding values that differ greatly from the analysis. A statistical analysis of QC

historic SST data is produced from the 19th century to 1997. The analysis and comparisons

with other analyses are discussed. Error estimates for the analysis show that sampling error is

greatest before 1880, when analyses may not be reliable for most regions. Between 1880 and 1941, uncertainties in the bias correction are a major part of total uncertainty. Analyses are most reliable beginning in the late 1940s, when sampling is relatively dense and no bias

correction is applied.
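The QC step described in this abstract (compare each observation with an analysis value, discard those that differ greatly) can be pictured with a minimal sketch. This is not Smith and Reynolds' code: the function name `qc_filter`, the fixed spread `sigma`, and the threshold `k` are all hypothetical choices made only for illustration, and the abstract does not say what rejection criterion was actually used.

```python
def qc_filter(observations, analysis, sigma, k=3.0):
    """Keep observations lying within k*sigma of the matching analysis value.

    observations, analysis: equal-length sequences of SSTs in deg C.
    sigma: assumed typical spread of good observations about the analysis.
    k: assumed rejection threshold in units of sigma (hypothetical default).
    """
    kept = []
    for obs, ref in zip(observations, analysis):
        # Discard values that "differ greatly" from the analysis.
        if abs(obs - ref) <= k * sigma:
            kept.append(obs)
    return kept


# Illustrative numbers only: the third report is 7.1 deg C off the analysis.
obs = [18.2, 18.9, 25.4, 17.6]
ana = [18.0, 18.5, 18.3, 18.1]
good = qc_filter(obs, ana, sigma=1.0, k=3.0)
```

Note that a check of this kind leans on the analysis itself, so anything built into the analysis (including any bias correction) carries through to which observations survive.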

International Workshop on Advances in the Use of Historical Marine Climate Data:

Boulder, Colorado, USA, January 2002

Creating the HadSST Gridded in situ SST Analysis

David Parker, Met Office, UK

Grid-box-average sea surface temperatures are adjusted to compensate for the effects on

variance of changing numbers of contributing data. The technique damps monthly average

temperature anomalies over a grid box by an amount inversely related to the number of

contributing observations. Thus the reduction of variance corrections is greatest over data

sparse regions. After adjustment, the data are unaffected by artificial variance changes which

might affect, in particular, the results of analyses of the incidence of extreme values. The

effects of our procedures on hemispheric temperature anomaly series are small. Our

technique is described in detail in Jones et al. (J. Geoph. Res., 106, 3371-3380 (2001)) in

which land surface air temperatures are also treated. The improved data were used in an

analysis of extremes by Horton et al. (Climatic Change, 50, 267-295 (2001)) and show little

evidence of changes in the incidence of extremes after trends in global-average sea surface

temperature have been taken into account.
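To make the idea of damping anomalies "by an amount inversely related to the number of contributing observations" concrete, here is a rough sketch. It assumes a textbook result: if n observations share an average mutual correlation rbar, the variance of their mean exceeds that of a fully sampled box by the factor (1 + (n - 1) * rbar) / (n * rbar). Whether this matches the published procedure term by term is not confirmed here; `damping_factor`, `adjust_anomaly`, and the value of `rbar` are hypothetical.

```python
import math


def damping_factor(n_obs, rbar):
    """Scale factor (<= 1) shrinking a grid-box anomaly built from n_obs values.

    rbar is an assumed average correlation between observations in the box.
    The factor approaches 1 as n_obs grows, so sparse boxes are damped most.
    """
    if n_obs < 1:
        raise ValueError("need at least one observation")
    if not 0.0 < rbar <= 1.0:
        raise ValueError("rbar must lie in (0, 1]")
    return math.sqrt(n_obs * rbar / (1.0 + (n_obs - 1) * rbar))


def adjust_anomaly(anomaly, n_obs, rbar):
    """Return the damped monthly anomaly for one grid box."""
    return anomaly * damping_factor(n_obs, rbar)


# Illustrative only: a 2.0 deg C anomaly from a single report is halved
# when rbar = 0.25, while one from 1000 reports is left almost unchanged.
sparse = adjust_anomaly(2.0, n_obs=1, rbar=0.25)
dense = adjust_anomaly(2.0, n_obs=1000, rbar=0.25)
```

The point for the discussion here is that the adjustment changes the variance of individual boxes; by the abstract's own account its effect on hemispheric averages is small.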

International Workshop on Advances in the Use of Historical Marine Climate Data:

Boulder, Colorado, USA, January 2002

Construction and Testing of the Globally Complete HadISST1 Data Set

Nick Rayner, Met Office, UK

We present the Hadley Centre’s sea-Ice and Sea Surface Temperature (HadISST1) data set.

HadISST1 replaces the Global sea-Ice and Sea Surface Temperature (GISST) data sets, and

consists of monthly globally-complete fields of SST and sea-ice concentration on a 1° grid

from 1870 to 1999. SST anomaly fields are reconstructed using reduced-space optimal

interpolation (RSOI). Satellite-based SSTs are blended smoothly with in situ SSTs with the

aid of RSOI. As in GISST, we reconstruct the global field of long-term “trend” separately

from the remaining SST variability. The local detail of SST is improved by superposing

quality-improved gridded SSTs. Satellite microwave-based sea-ice concentrations are

compensated for the impact of melt ponds and wet snow, and the historical in situ

concentrations are made homogeneous with the modified satellite record. The procedure for

estimating ice-zone SST has been refined. HadISST1 SSTs are more coherent in time than those in GISST, and are of comparable quality to several shorter published SST analyses.

The Antarctic Circumpolar Wave is well reproduced since the 1980s. We verify HadISST1 against other SST data sets and use diagnostics from atmospheric model simulations to show

that HadISST1 is an improvement on GISST.

Oh, I forgot to say that another area of SST data handling suitable for Steve’s math and stat skills is the method of adjustment for bias due to different sample sizes between grid squares and the method of extrapolating data from squares for which there are observations to ones where there are not. This is a particular issue for the Southern Hemisphere where most of the world’s oceans are. Given the capacity of the oceans to absorb radiation compared to land, and thus buffer a forcing, this is an important issue.

The extrapolations used are purely statistical. That is, as far as I have been able to discover, there is no attempt to model how different parts of the planet’s surface are differentially affected by solar or greenhouse forcing when extrapolating from one grid square or set of grid squares to another. We know that during the Medieval Warm Period in Western Europe, winters were colder in China (Lamb), i.e. we know that such variability has occurred. I seem to remember that during the Younger Dryas, temperatures in the North Atlantic and in Antarctica were going in opposite directions, so this issue of variable regional response to forcing is significant.

Re 19:

I served on conventional and nuclear steam vessels.

The 1830s steamers would be a bit irrelevant to the issue of feedwater versus cooling intake, though. I don’t know the history of open cycle steam at sea.

RE: 19

I wrote:

“I strongly suspect that for the first 70 years of steamship history, there were no condensers.” I meant distillation equipment, not condensers.

All Watt type engines had condensers, so TCO’s point about taking temperature readings on the sea-water side of the condenser stands as a factor.

As to TCO’s comment that the 1830s steamships are not relevant, he is, of course, quite right. Why I made reference to them was to demonstrate that steamships have been around a long time and that there were a great many experiments with steam ship machinery before it standardized on triple expansion in the 1890s. The Great Britain had triangle engines, for example. My point still stands, I think: there could have been all kinds of arrangements tried for measuring feedwater temperature; and it is far from clear when this started – it could have been many decades before the date of 1941 quoted.

I came across a statement that engineers on steam ships would use the bucket method to measure the temperature of the water so as to estimate the maximum power that was possible from the engine(s).

I can see where the temperature of the feedwater to the boiler would affect coal consumption and perhaps steam temperature and pressure.

Would the temperature of feed water to the condenser matter for power output?

Any thoughts, TCO?

Mike

Certainly the condenser temperature would matter, though not perhaps as directly via pressure as much as the rate of condensation which, given a fixed engine size, would help determine the power.

The colder your condensation temperature, the more efficient the engine (basic heat cycles…analogous to Carnot, but a bit different shape: Rankine). However, subcooling of the condensate affects the efficiency of the cycle adversely. Draw the diagram (H-s or T-s) of the Rankine cycle and add the complication and you will see that. It’s not an extreme effect. Some subcooling is necessary anyhoo for practical reasons: to prevent cavitation in the feed pump (pushing the liquid into the boiler).
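For anyone who wants to put numbers on the "colder condenser, more efficient engine" point, a quick sketch using the ideal Carnot limit, 1 - T_cold/T_hot with temperatures in kelvin. This is only an upper bound: a real Rankine cycle does worse, and the steam and condenser temperatures below are made-up illustrative values, not figures for any actual ship.

```python
def carnot_efficiency(t_hot_c, t_cold_c):
    """Ideal (Carnot) efficiency for source/sink temperatures given in deg C."""
    t_hot = t_hot_c + 273.15   # convert to kelvin
    t_cold = t_cold_c + 273.15
    if t_cold >= t_hot:
        raise ValueError("sink must be colder than source")
    return 1.0 - t_cold / t_hot


# Hypothetical case: steam at 150 C, condenser at 40 C (warm sea)
# versus 20 C (cool sea).
warm_sea = carnot_efficiency(150.0, 40.0)
cool_sea = carnot_efficiency(150.0, 20.0)
```

With these assumed temperatures the ideal limit moves from about 26% to about 31%, which is why an engineer would have had reason to care what the seawater temperature was.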

I think there is a bit of a nomenclature issue: feedwater is essentially condensate. There is some makeup water because of steam leaks in the engine room, but it is quite small compared to flows of feed/condensate and is added intermittently. I guess in the olden days they might have added seawater. Would have been pretty harsh on the boiler though…distillation (or reserve tanks) has been the norm for a long time now.

It’s not that hard to set up an evaporator to make fresh water with if you already have a steam system in the ship. The steam gives you heating and also allows drawing a vacuum through a venturi-like device called an air ejector. It’s not like distillation was not known for a while now from chemistry/spirits industries.

Thanks Dave and TCO,

What I am getting out of this is that there was a significant motivation for steamship engineers to measure feedwater temperatures for boilers and condensers from very early on and to scrupulously record it in order to learn from experience. As soon as Carnot’s results became general engineering knowledge (now when was that, I wonder?) or they figured it out themselves from operational experience they would have been doing this.

It would not only have been the power output that motivated them per se, but also coal consumption. The bigger the difference between the condenser temperature and the steam temperature in the cylinder, the more efficient the engine, no? This motivation would have been especially powerful in the early days of single cylinder engines whose efficiency was so low that most of the cargo was coal fuel and only high paying freight like mail, precious metals and people in a hurry could pay the tab. As double and triple expansion came along, even with the lower coal consumption per hp, the need would have remained as shipping rates fell and the margins on bulk cargoes were slim in comparison to the early high value added ones.

There seems to me a distinct possibility that the bucket-inlet and depth of sampling issues could go back a very long way.

Mike
