The calculation of the PAGES2K regional average contains a very odd procedure that thus far has escaped commentary. The centerpiece of the PAGES2K program was the calculation of regional reconstructions in deg C anomalies. Having done these calculations, most readers would presume that their area weighted average (deg C) would be the weighted average of these regional reconstructions already expressed in deg C.

But this isn’t what they did. Instead, they first smoothed by taking 30-year averages, then converted the smoothed deg C regional reconstructions to SD units (basis 1200-1965) and took an average in SD units, converting the result back to deg C by “visual scaling”.

This procedure had a dramatic impact on the Gergis reconstruction. Expressed in deg C and as illustrated in the SI, it has a very mild blade. But the peculiar PAGES2K procedure amplified the relatively small amplitude reconstruction into a monster blade with a 4 sigma closing value. Following the Arctic2K non-corrigendum correction, it is the largest blade in the reconstruction (and has the greatest area weight).

I’ll show this procedure in today’s post.

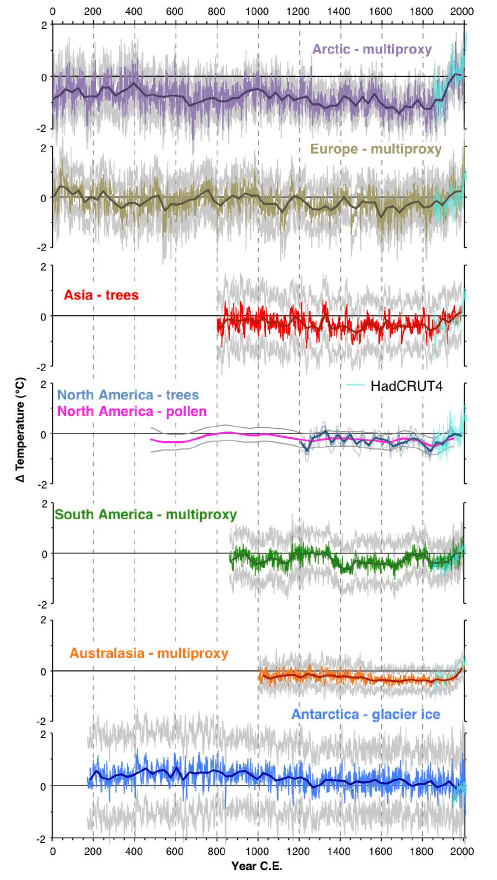

PAGES2K Figure S2

First, here is PAGES2K Figure S2, showing the seven regional reconstructions (the Arctic obviously pre-corrections). The amplitude of the Australasian temperature change estimates (for whatever they are worth) is the smallest.

Figure 1. Excerpted from PAGES2K Figure S2. Original caption: Proxy temperature reconstructions for the seven regions of the PAGES 2k Network. Temperature anomalies are relative to the 1961-1990 CE reference period. Grey lines around expected-value estimates indicate uncertainty ranges as defined by each regional group (Supplemental Information Part II), namely: Antarctica, Australasia, North America pollen, and South America = ± 2SE; Asia = ± 2 RMSE; Europe = 95% confidence bands; Arctic = 90% confidence; North American trees = upper/lower 5% bootstrap bounds (these are inherently narrower than those of many other regions because they are reported at decadal and multi-decadal, rather than annual resolution). Instrumental temperatures are area-weighted mean annual temperatures over the reconstruction domains shown in Figure 1 from HadCRUT4 (ref. 9) land and ocean, rather than the target temperature series used in the regional reconstructions. This facilitates a uniform comparison among regions using a data series that extends to 2010. The actual reconstruction targets for each region are specified in Table 1. Reconstruction time series are listed in Supplementary Database S2.

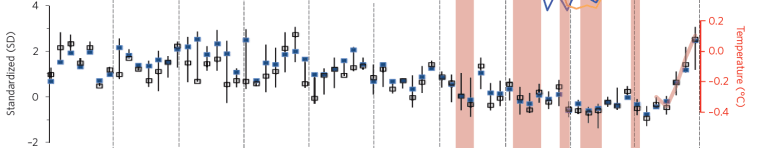

Re-converting to SD Units

It’s hard to understand why the PAGES2K authors would convert series expressed in deg C back to SD units in order to calculate an area average, but that’s what they did. The calculation is not described in detail, but is illustrated in their Figure 4b (and the nearly identical top panel of SI Figure 5). As I understand their procedure, they first calculated 30-year averages for each reconstruction (ending in 2000); then they converted the 30-year average series to SD units (basis 1200-1965); and then they calculated an area average – shown in their Figure 4b (and SI Figure 5) as blue dots – see below. This was then converted to temperature (see scale on right axis) by a super-sophisticated statistical methodology described in the caption as “scaled visually to match the standardized values over the instrumental period.”

Figure 2. Excerpt from PAGES2K Figure 4b (period 0-2000). Similar figure in PAGES2K SI Figure 5 top panel. Original caption: Composite temperature reconstructions with climate forcings and previous hemisphere-scale reconstructions. … . b, Standardized 30-year-mean temperatures averaged across all seven continental-scale regions. Blue symbols are area-weighted averages using domain areas listed in Table 1, and bars show twenty-fifth and seventy-fifth unweighted percentiles to illustrate the variability among regions; open black boxes are unweighted medians. The red line is the 30-year-average annual global temperature from the HadCRUT4 (ref. 29) instrumental time series relative to 1961–1990, and scaled visually to match the standardized values over the instrumental period
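The three steps as I read them (30-year means, conversion to SD units on a 1200-1965 basis, area-weighted average) can be sketched in a few lines. This is only an illustration under stated assumptions, not the PAGES2K code: the function names are hypothetical, the bins are assumed to be consecutive and to end in 2000, a bin is assumed to belong to the base period if its end year falls in 1200-1965, the sample SD is used, and the two "regions" are synthetic series with the same 0.4 deg C closing step.

```python
import numpy as np

def thirty_year_means(years, values, end_year=2000, width=30):
    """Average annual values into consecutive bins; the last bin ends in end_year."""
    years = np.asarray(years)
    values = np.asarray(values, dtype=float)
    keep = years <= end_year
    years, values = years[keep], values[keep]
    # Bin 0 is the final bin (end_year-width+1 .. end_year); older bins count upward.
    idx = (end_year - years) // width
    bins = np.unique(idx)[::-1]                  # oldest bin first
    bin_end = end_year - bins * width
    means = np.array([values[idx == b].mean() for b in bins])
    return bin_end, means

def to_sd_units(bin_end, means, base=(1200, 1965)):
    """Standardize on bins whose end year falls inside the base period (assumed rule)."""
    in_base = (bin_end >= base[0]) & (bin_end <= base[1])
    return (means - means[in_base].mean()) / means[in_base].std(ddof=1)

def area_weighted_average(z_by_region, areas):
    """Weighted mean across regions; rows are regions, columns are 30-year bins."""
    return np.average(np.asarray(z_by_region), axis=0,
                      weights=np.asarray(areas, dtype=float))

# Synthetic illustration only: same closing step, different background amplitude.
years = np.arange(1001, 2001)
wiggle = np.sin(2 * np.pi * (years - 1985.5) / 200.0)  # averages to ~0 over 1971-2000
step = np.where(years > 1970, 0.4, 0.0)
quiet = 0.1 * wiggle + step   # small-amplitude region
noisy = 0.5 * wiggle + step   # large-amplitude region

bin_end, quiet_c = thirty_year_means(years, quiet)
_, noisy_c = thirty_year_means(years, noisy)
quiet_z = to_sd_units(bin_end, quiet_c)
noisy_z = to_sd_units(bin_end, noisy_c)
composite = area_weighted_average([quiet_z, noisy_z], areas=[3.0, 1.0])

print("closing value, deg C:   ", quiet_c[-1], noisy_c[-1])
print("closing value, SD units:", quiet_z[-1], noisy_z[-1])
print("area-weighted composite:", composite[-1])
```

The two synthetic regions close at the same value in deg C, but the low-amplitude one closes much higher in SD units, and a large area weight then carries that into the composite.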

Regional Panel in SD Units

PAGES2K did not show the regional reconstructions in SD units – the form in which they contributed to the area-weighted average – an oversight which is remedied below. I’ve also used the PAGES2K-2014 Arctic version and added the average of 2014 versions. PAGES2K emphasized the long-term cooling trend up to the 20th century. Squinting at these reconstructions, I’d be inclined to say that reversal of long-term cooling occurred somewhat earlier in their European and Asian reconstructions than their Arctic reconstruction and that no reversal is yet discernible in Antarctica, by far the coldest area. Curiously, the Gergis Australasian reconstruction has, by a considerable margin, the largest blade, closing at over 4 sigma. Thus, although it has a deceptively mild appearance when expressed in deg C (as in the main SI illustration excerpted above), it has a monster blade as used in the regional averaging. This contribution is further exacerbated by the assignment of weights, as the Gergis Australasian reconstruction is assigned the largest area of any of the regions.

Figure 3. Seven regional reconstructions expressed in SD Units, together with area-weighted average (red). The Gergis reconstruction (left column, bottom row) closes at over 4 sigma. The uncorrected Arctic reconstruction had a similarly large blade, but is more muted once the corrections to date are incorporated.

Discussion

This very large contribution from the Gergis reconstruction invites further examination, which I plan in a subsequent post. The original Gergis reconstruction was discussed at length when it was released in 2012. Their screening procedure was examined closely at CA, with Jean S demonstrating that, despite claims in their article that they had used detrended correlation to guard against spurious correlation, they had actually used non-detrended correlation. This led to a non-retraction retraction: the article was disappeared without an obituary or retraction notice. The authors did not acknowledge CA; instead, they claimed that they had “independently” discovered the problem at exactly the same time as the CA discussion. Mann and Schmidt both wrote to Gergis supporting ex post screening. Gergis and coauthors (including Karoly) tried to persuade Journal of Climate that they should be allowed to change their description of methodology, but the journal asked them to show results using the described methodology as well and the article disappeared.

The Gergis reconstruction in PAGES2K uses an almost identical network to the retracted article: of the 27 proxies in the original network, 20 are used in the P2K network: out are 1 tree ring, 2 ice core and 4 coral. The new network has 28 proxies: in are 2 tree ring, 1 speleo and 5 coral records. As discussed in my original commentary, there are only two records in this network going back to the MWP – both tree ring records considered in Mann and Jones 2003 without much centennial variability. As in the original reconstruction, the Gergis blade does not arise from multiple long records exhibiting modern values elevated relative to medieval values, but is more like a moustache stapled onto a shaft. I’ll revisit the revised Gergis reconstruction in a separate post.

80 Comments

Deceptively mild appearance in deg C. Monster blade in SD units, weighted by monster area. How they must have agonised before making those choices.

Wow, true mathmagic.

Fingerpainting.

a little blue, a little red, a little more ..

I’ve told others that high level statistical softwares free the mind from the tedium of writing code. Maybe they hold too much power.

>a little blue, a little red, a little more ..

>

>I’ve told others that high level statistical softwares free the mind.

Yes, this math from matrix machines have too much power. What you need is the one basic algorithm to operate on all your data.

🙂 Think there might be a happy medium in there somewhere

Let me get this straight: The Gergis reconstruction, which was not published, because it was found to be flawed, is incorporated into the PAGES2K?

To summarize: they take flawed results, put them into the super-duper CLIMO-MATIC blender, pulse it for a while, then put the result into the super-duper CLIMO-RECONSTRUCTOR, and voila; Bob’s your uncle; we have a hockey stick.

Someone please tell me I have interpreted this wrong.

“You’ve interpreted this wrong.” [Fingers crossed behind back, and tongue firmly in cheek]

Your interpretation matches mine and it astounds me, likewise. I was not even aware that the Gergis paper was included in PAGES2K until recently. Apparently the hockey stick has powers like the apprentice’s broom.

Science weeps.

It’s a bit worse than that.

The Gergis paper spelled out a methodology – detrending the data before selecting proxy series – designed to overcome one fundamental objection, that without detrending, the choice of proxies would in effect preselect those with a positive temperature signal. Whether or not there were other problems with the paper, the authors chose that methodology, acknowledging the problem of preselection and proposing a solution.

Then when they “independently” (cough, cough) discovered that they had NOT detrended the data before selecting proxies, and that if they followed their stated methodology there was effectively no temperature signal, they had two choices. Say “this methodology, which we chose because we thought it would be the best way of analyzing this data, shows there is no significant temperature trend”, or say “if we do this differently, failing to overcome the problems we already identified, we can still dig out a (positive) temperature signal”.

No prizes for guessing which of those two they tried, reinforcing skeptics’ impressions that climate scientists are only interested in papers that push an alarmist agenda and that they are prepared to keep torturing the data until it confesses.
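For readers who want to see why detrending matters for screening, here is a minimal synthetic sketch. It is not the Gergis et al. screening code: the 70-year window, the series and the function names are all hypothetical. The "proxy" shares nothing with the "target" except a linear trend, yet the non-detrended correlation is high, while the detrended correlation is small.

```python
import numpy as np

def detrend(y):
    """Remove a least-squares straight line from y."""
    t = np.arange(len(y), dtype=float)
    slope, intercept = np.polyfit(t, y, 1)
    return y - (slope * t + intercept)

def corr(a, b):
    """Pearson correlation of two equal-length series."""
    return float(np.corrcoef(a, b)[0, 1])

# Hypothetical 70-year calibration window (synthetic data, not real proxies).
t = np.arange(70, dtype=float)
target = 0.02 * t + 0.2 * np.sin(2 * np.pi * t / 7.0)   # trend + year-to-year wiggle
proxy = 0.02 * t + 0.2 * np.cos(2 * np.pi * t / 11.0)   # same trend, unrelated wiggle

r_raw = corr(proxy, target)                             # what non-detrended screening sees
r_detrended = corr(detrend(proxy), detrend(target))     # what detrended screening sees

print("non-detrended r:", round(r_raw, 2))
print("detrended r:    ", round(r_detrended, 2))
```

A proxy like this one would pass a non-detrended screen on the strength of the shared trend alone, which is the preselection problem the stated methodology was meant to avoid.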

Who was it that was supposed to detrend the data, I wonder? I wouldn’t care to name her, buuut…

According to the “Supplementary Information:”

“Our temperature proxy network (Fig. S13) was drawn from a broader Australasian domain: 90°E – 140°W, 10°N – 80°S (details provided in Neukom and Gergis 46). This proxy network showed optimal response to Australasian temperatures over the SONDJF period, and contains the austral tree-ring growing season during the spring-summer months.”

Here is citation 46:

Neukom, R. & Gergis, J. Southern Hemisphere high-resolution palaeoclimate records of the last 2000 years. The Holocene 22, 501-524 (2012)

Forms of the word “teleconnection” appear 17 times in this Holocene article. Record?

Another part of the exaggeration involves the careful choice of the period for calculating the standard deviation. I’ve never understood using anything but the entire record for calculating the standard deviation, and this bastardized procedure shows why.

If (as in the case of Australasia) the dataset goes up or down significantly at the start or the finish, then using a shorter baseline, like say the 1200-1965 they used, just exaggerates these excursions. That way, you end up with the four+ standard deviations of the transformed Australasian data. Bad statisticians … no cookies.

As always, Steve, good work.

w.
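The baseline effect described in the comment above is easy to reproduce on made-up numbers. The sketch below is only an illustration: the series is synthetic (a steady wiggle plus a rise after 1965), the helper name `zscore` is hypothetical, and the sample SD is assumed. It standardizes the same series twice, once on the full record and once on 1200-1965.

```python
import numpy as np

def zscore(values, years, base=None):
    """Standardize on the full record, or on the inclusive (start, end) base period."""
    values = np.asarray(values, dtype=float)
    years = np.asarray(years)
    if base is None:
        mask = np.ones(len(years), dtype=bool)
    else:
        mask = (years >= base[0]) & (years <= base[1])
    return (values - values[mask].mean()) / values[mask].std(ddof=1)

# Synthetic series: small steady wiggle, then a 0.5 deg C rise after 1965.
years = np.arange(1000, 2001)
series = 0.1 * np.sin(2 * np.pi * years / 60.0)
late = years > 1965
series[late] += np.linspace(0.0, 0.5, late.sum())

z_full = zscore(series, years)                     # entire record as the basis
z_base = zscore(series, years, base=(1200, 1965))  # basis excludes the closing rise

print("closing value, full-record basis:", round(z_full[-1], 2))
print("closing value, 1200-1965 basis:  ", round(z_base[-1], 2))
```

Because the base period leaves out the closing rise, its SD is smaller and its mean lower, so the same final value lands further out in SD units.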

S. McI.: “…a super-sophisticated statistical methodology described in the caption as ‘scaled visually to match the standardized values over the instrumental period.’” A “super-sophisticated statistical methodology”: Ouch! That smarts! Back in the old days of CA we used to get quite a bit of the acerbic wit, but nowadays not so much–so it seems to me. Frankly, I like this when we do get it, as it brightens my day. In terms of total acerbity, I am ranking this one up there with Mark Steyn’s Mann-descriptor, “leader of the tree-ring circus”. Well, I had better stop now, as I am wandering further and further off-topic.

Rumor has it they moved on from the Mark 1 eyeball style of adjustment and are now using the latest version, Mark 1.1 beta. The extra digit after the decimal indicates the number of eyes open while adjusting.

It’s certainly opened my eyes.

A brighter iris gleams upon the burnished statistic.

=========

Wodehouse or Tennyson? No, kim.

“In the spring, Jeeves, a livelier iris gleams upon the burnished dove.”

http://madameulalie.org/strand/Jeeves_in_the_Spring_Time.html

Do the publishers of these papers ever ask a qualified statistician to review them? What the hell is going on in this field?

They are more into the art than the craft.

No statisticians were involved in this process.

Well I’d like to ask again is this really the same as the original Gergis, Karoly study?

Steve points out in this post that it has changed a bit.

Here is a quote from his discussion section from this post.

“The Gergis reconstruction in PAGES2K uses an almost identical network to the retracted article: of the 27 proxies in the original network, 20 are used in the P2K network: out are 1 tree ring, 2 ice core and 4 coral. The new network has 28 proxies: in are 2 tree ring, 1 speleo and 5 coral records. As discussed in my original commentary, there are only two records in this network going back to the MWP – both tree ring records considered in Mann and Jones 2003 without much centennial variability”

Does the methods section demand squinting during the visual inspection process? It could affect the confidence intervals of that step.

These are penumbras and emanations which are only discerned by looking into the boiling caldron while chanting “double, double toil and trouble”. Squinting helps. Sometimes crossing the eyes.

Is there a connection between “eye of newt” and “scaled visually to match the standardized values over the instrumental period”? Sometimes these things are staring you in the face. Unfortunately.

As I understand their procedure, they first calculated 30-year averages for each reconstruction (ending in 2000); then they converted the 30-year average series to SD units (basis 1200-1965) and then calculated an area average – shown in their Figure 4b (and SI Figure 5) as blue dots – see below. This was then converted to temperature (see scale on right axis) by a super-sophisticated statistical methodology described in the caption as “scaled visually to match the standardized values over the instrumental period.”

OK so I’m a mere junior at this whole statistics thing but I seem to recall that smoothing should always be the last step because otherwise you get all sorts of errors, spurious correlations etc etc. In fact isn’t this specifically warned against in numerous stats textbooks and the like?

Excuse my ignorance but could someone say in a line what is an “SD Unit”? I’m guessing that there is a linear relationship between SD units and deg C – but that’s just a guess.

Martin Posted Nov 8, 2014 at 3:43 AM

Standard deviations. The dataset is “normalized” or “standardized” by subtracting the mean and dividing by the standard deviation.

w.

SD = Standard Deviation. And, yes, there is a linear relationship but it will be different for each area depending on the variation in each data-set.
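Written out, with $T_r(t)$ the reconstruction for region $r$ in deg C and $\mu_r$, $\sigma_r$ its mean and standard deviation over the reference period (1200-1965 in the post); the symbols are illustrative, not taken from the paper:

$$
z_r(t) = \frac{T_r(t) - \mu_r}{\sigma_r}, \qquad T_r(t) = \mu_r + \sigma_r\, z_r(t).
$$

Since $\sigma_r$ differs from region to region, one deg C corresponds to a different number of SD units in each region.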

By the way it is not even clear that it is appropriate to use SD units at all. While a SD can be calculated for any dataset it is really only meaningful for normally distributed data, and climate data are often not normally distributed.

Technically tty, standard deviations can be meaningful for any data set. The reason is there are different ways of calculating standard deviations. The most widely known one (and likely the only one most people will ever see) does assume the data has a normal distribution. Others don’t though.

It does actually matter sometimes in climate discussions because so much of the data used in climate science has non-normal distributions.

Wikipedia has a discussion of standard deviation and various types of random variables

http://en.wikipedia.org/wiki/Standard_deviation

But what sort of super sophisticated statistical deviation could they achieve by simply adding-

“Eye of newt, and toe of frog,

Wool of bat, and tongue of dog,

Adder’s fork, and blind-worm’s sting,

Lizard’s leg, and howlet’s wing,” ??

If only these people could get out of their rut and envision the boundless possibilities.

Standard deviation is not connected with the normal distribution, or any other for that matter. It is simply the square root of the average squared deviation from the mean of a sample or a population: the sum of squared deviations is divided by the number of observations (or, if one is estimating the standard deviation of the underlying population from a sample of the population, by one less than the number of observations) before the square root is taken. The normal distribution comes into the scene if one attempts to calculate inferential statistics, such as confidence intervals, from the SD. In this event a distribution and its statistical properties must be invoked. As has been said above, this is in most cases (and generally by default) assumed to be the normal distribution.
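In symbols (standard textbook notation, with $N$ the population size, $n$ the sample size, $\mu$ the population mean and $\bar{x}$ the sample mean), the two versions described above are:

$$
\sigma = \sqrt{\frac{1}{N}\sum_{i=1}^{N}(x_i - \mu)^2}, \qquad
s = \sqrt{\frac{1}{n-1}\sum_{i=1}^{n}(x_i - \bar{x})^2}.
$$

Neither expression assumes any particular distribution.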

snip

Steve: sorry, no more discussion of standard deviations.

“Visual Scaling” is probably a good technique for a landscape painter, or maybe a landscaper. In a science paper, not so much.

You don’t understand. The authors have a cumulative total of 31 years of eyeballing curves of temperature proxy data. It is a science in itself.

Angles of perspective, sines, cosines, tangents, these all play a part. Do not presume to correct the experts. And, ha!, fat chance on FOI.

I guess if you “visually scale” something using the Eye of Sauron, then it will look hot.

When Steve posted on the Gergis paper on October 30, 2012, it was “in review” at JoC. Gergis’ cv still lists it as in review, that’s three Halloweens in zombie guise.

That really is scary.

Great work, as always, Steve.

All we need now for perfection is Nick (Stokes) to join the discussion in an attempt to defend the indefensible 🙂

Nicky is ironing out his briefs. He’ll be along.

The Gergis paper has disappeared but some vestigial evidence of its existence and its problems still exists.

http://newsroom.melbourne.edu/studio/ep-149

Scientific study resubmitted.

An issue has been identified in the processing of the data used in the study, “Evidence of unusual late 20th century warming from an Australasian temperature reconstruction spanning the last millennium” by Joelle Gergis, Raphael Neukom, Ailie Gallant, Steven Phipps and David Karoly, accepted for publication in the Journal of Climate.

The manuscript has been re-submitted to the Journal of Climate and is being reviewed again.

I followed up with Melbourne and with the Journal but it just got “no comment”.

A “disappeared” paper that was never honestly defended or accounted for is no barrier to high awards for climate scientists:

the “Oscars of Australian Science”

They don’t acknowledge critical flaws, they merely plunge onward pretending that nothing is wrong:

Gergis team wins 2014 Eureka Prize

[emphasis added]

That news from Down Under made me leap out of my bath in the old country.

“The team has also shown that during the 1997-2009 drought the Murray River ran at a 1500-year low”

Did it???

http://www.cv.vic.gov.au/stories/drought-stories/11011/koondrook-in-the-bed-of-the-murray-drought-1915/

Gergis still shows the paper as under review. In the most recent iteration, the authors are shown as Gergis, Neukom, Gallant and Karoly, with the PAGES AUS2k project members being dropped from the masthead.

The discussion of the Australasian network in PAGES2K is taken nearly verbatim from Gergis et al (under review), but this article under review was not cited.

I’d be surprised if Nature’s policies permit such self-plagiarism.

re the “self-plagiarism”

Either reckless and unethical, or perhaps a sign that the previous “under review” paper is never going to be published, i.e., one can presumably utilize passages of one’s previously UN-published work in a future work to be reviewed separately? But recycling exact text without quotation and attribution must be a big no-no for responsible scholarship. There are certainly academic authors known for perpetual recycling of their basic ideas and arguments, but however controversial that can be it is quite different from literal reproduction.

Also, the potential (lack of) ethics and (im)propriety of continuing the “under review” charade would be a distinct issue, so after 2 1/2 years is the Gergis, Neukom, Gallant, and Karoly manuscript, which was originally announced as “published” with great fanfare, TRULY “under review” or not? …..

Related to the “Eureka Prize” … did the prize competition rely upon un-published materials, or how would the “1000 years” claim be substantiated in scientific terms, in time for that prize decision?? This is asserted to be the top prize in Australian science, according to the Gergis website claim…. although I’d think that serious scientists should be embarrassed to associate their work with “Oscars” type prizes. Put that down as another oddity of Cli-Sci(TM)….

No doubt Deep Climate and John Mashey are on the case. /sarc

“Visual Scaling” (AKA “Looks good to me!”) Invented in a bar somewhere after a long night of pounding beers. Can be applied to all sorts of problems.

Yes, “beer-goggling” now may have an application to climate science.

The weighted average is suspect. The Supplementary info at page 33 gives “Australasia is herein defined as the land and ocean areas of the Indo-Pacific and Southern Oceans bounded by 110°E-180°E, 0°-50°S.”

The paper at Table 1 gives the Australasian land plus sea area as 37.9 million sq km, which agrees with those lats and longs.

However, the total land area of Australia and New Zealand is about 8 million sq km. Most of the proxies are from land or close to it.

But, for the area of influence of the proxies, the authors enlarge to the map quantities of 90°E-140°W, 10°N-80°S which is an area of about 140 million sq km including parts of the Northern Hemisphere up to the mid Philippines and a fair part of Antarctica, treated separately.

What is conventionally known as Australasia represents a small part of both map areas and hence the figure used for the weighted average is not well chosen, being inconsistent for example with the land:sea ratio of the North America and Antarctic study areas. This seems to be another way to enhance the small blade in the final calculations.
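The box areas quoted above can be checked with the standard formula for a latitude-longitude box on a sphere, area = R² × Δlongitude (in radians) × (sin of northern latitude − sin of southern latitude). The sketch below assumes a spherical Earth with a mean radius of 6371 km; the function name is hypothetical. On that approximation the target domain (110°E-180°E, 0°-50°S) comes out at roughly 38 million sq km, in line with Table 1, while the broader proxy domain (90°E-140°W, 10°N-80°S) comes out at roughly 107 million sq km, somewhat below the "about 140 million" given above, so that figure may rest on a different calculation.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean radius; a spherical Earth is an assumption

def box_area_km2(lat_south, lat_north, lon_west, lon_east):
    """Area of a lat-lon box on a sphere. Degrees; north and east are positive."""
    # Modulo handles boxes that cross the 180th meridian (e.g. 90E to 140W).
    dlon_deg = (lon_east - lon_west) % 360.0
    band = math.sin(math.radians(lat_north)) - math.sin(math.radians(lat_south))
    return EARTH_RADIUS_KM ** 2 * math.radians(dlon_deg) * band

# Reconstruction target domain: 110E-180E, 0-50S
target_area = box_area_km2(-50.0, 0.0, 110.0, 180.0)
# Broader proxy domain: 90E-140W, 10N-80S
proxy_area = box_area_km2(-80.0, 10.0, 90.0, -140.0)

print("target domain, million sq km:", round(target_area / 1e6, 1))
print("proxy domain, million sq km: ", round(proxy_area / 1e6, 1))
```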

The Australasia reconstruction also fails to find the high instrumental temperatures of the mid-late 1800s and the Federation drought period of 1900 +/- a few years. These are not documented over the whole of Australasia but the known effects should show in a decent reconstruction. Plausibly, they were likely to be close to present temperatures. If they do not show, then what faith can be put in anomalies (or lack thereof) within pre-instrumental data?

There are several other examples in the paper of ‘favourable’ choice of data. Dispassion lacks.

GS,

For the Pages2k “Australasia” proxies there are only two North of the equator. About three degrees north is Aus_23 Bunaken, a small island off the tip of Sulawesi, Indonesia. This is the sole proxy in the set that might be argued to be “-asian.” The Palmyra proxy is at about 6 degrees north, although the Pages2k data incorrectly indicates it as 6 degrees south.

Very strange is the extension to 80°S. The southernmost proxy is 47°S: Aus_15 Stewart Island, NZ. Then why the expansion from 50°S to 80°S ? That is a big piece of water to swallow.

“Our temperature proxy network (Fig. S13) was drawn from a broader Australasian domain: 90°E – 140°W, 10°N – 80°S (details provided in Neukom and Gergis 46).”

Deciphering this statement has caused me difficulty (e.g. what “details?”) The N&G article included Antarctic data (as well as SAmer and African) because it dealt with the whole S hemisphere. But in no way did it define “Australasian” as to include the Antarctic or any part of it.

Note also the furthest west Pages2k “Aus” proxy is Rarotonga at 160°W. Then why the extension to 140°W ?

The target area and proxy area are carried forward from Gergis et al 2012 – you need to consult it. Gergis et al 2012 included several Antarctic ice cores (Vostok, Law Dome) in the Gergis 2012 network as teleconnection predictors. These proxies are dropped from the PAGES2K – I don’t know whether they fell out geographically or whether they were screened out.

Well, maybe it is a small fish in this matter, but I will point out loud that the Pages2k citation to the Neukom and Gergis 2012 Holocene article is probably no mere mistake, but maybe a bit of hocus pocus to cite something, anything other than the withdrawn Gergis et al. 2012 – the true best prior authority.

I wish to trace some clerical and reading errors in the Gergis et al. product, and their later history of correction, which I think reconfirm the withdrawn paper as the ancestor to Pages2k Aus.

Gergis et al. 2012 says:

This study presents the first multi-proxy warm season (September–February) temperature reconstruction for the combined land and oceanic region of Australasia (0ºS–50ºS, 110ºE–180ºE).

The proxy coordinates as recited in Gergis et al. all fit within these parameters. But a problem is that several are entered wrongly: they lie on the other side of the equator and/or 180th meridian. Bunaken is 3ºN, not 3ºS. Palmyra is 6ºN-162ºW, not 6ºS-162ºE. Rarotonga is 160ºW, not 160ºE. In the Pages2k metadata this is mostly corrected. Bunaken is put north of the equator, Rarotonga is corrected too. Palmyra is corrected for longitude, but not for latitude (probably just a second clerical error). These mistakes are the reason why the Pages2k domain is extended to 10ºN and 140ºW. In the time between the creations of Gergis et al. (2012) and Pages2k Consortium (2013), somebody noticed that the Bunaken and Palmyra coordinates were erroneously entered. The corrections themselves are evidence of the relationship between Gergis et al. and Pages2k Aus.

Then there are the Antarctic proxies in Gergis et al. The Vostok coordinates are listed as 78ºS. This is correct. However, the Gergis et al. “oceanic region of Australasia” stretches “0ºS–50ºS.” (Stewart Island, NZ is recorded at “47ºS”) There is nothing in the main text of Gergis et al. arguing for or addressing why anything Antarctic should be considered Australasian. Yet Antarctic proxies are mysteriously listed at, “Table 1. Proxy data network used in the Australasian SONDJF temperature reconstruction.”

So, at points of time between the publication of Gergis et al. (2012) and its descendant Pages2k (2013), incorrect proxy coordinate data was corrected and the domain description was extended north, south, and east. At a later point it was decided to exclude the Gergis et al. Antarctic data from Pages2k Aus. However, reverting the “80ºS” back to an appropriate “50ºS” was overlooked. The only reason why Pages2k could show “80ºS” is that it is a relic of handling and editing the Gergis et al. product. Thus, a mere domain description ties Pages2k Aus to Gergis et al tightly, and not at all to Neukom and Gergis.

As follows are the first two paragraphs of the Australasia section of the Pages2k (2013) Supplement, page 33:

Proxy data and reconstruction target. Australasia is herein defined as the land and ocean areas of the Indo-Pacific and Southern Oceans bounded by 110°E-180°E, 0°-50°S. [GS pointed this out before. It is also the designated Gergis et al. domain] Our instrumental target was calculated as the September-February (SONDJF) spatial mean of the HadCRUT3v 5°x5° monthly combined land and ocean temperature grid [refs. 9, 54] for the Australasian domain over the 1900-2009 period.

Our temperature proxy network (Fig. S13) was drawn from a broader Australasian domain: 90°E-140°W, 10°N-80°S (details provided in Neukom and Gergis [fn.] 46). This proxy network showed optimal response to Australasian temperatures over the SONDJF period, and contains the austral tree-ring growing season during the spring-summer months.

I believe this confusing passage may be the product of a scrivener who was her/himself confused by the whole matter, which is indeed confusing. The clerical and reading errors in the Gergis et al. product I can see as nothing but mere mistakes. The attempts to correct these errors are clearly another line of evidence tying Pages2k Aus to Gergis et al. I do not guffaw at minor errors, but there sure are a lot of them here. However, I tend to believe the implication of Neukom and Gergis (2012) as the authority for the Pages2k Aus work is no error at all. Its citation was meant to cover the tracks between Pages 2k and the withdrawn Gergis et al. article.

Steve: I don’t think that anyone contested that Gergis et al 2012 was the origin of the PAGES2K AUS reconstruction. You are right to point out that their citation to Neukom and Gergis 2012 is misleading.

Also your chronology is gilding the lily a bit. The more expansive region for the proxy network was already presented in Gergis et al 2012 and was not new in PAGES2K. Gergis et al 2012 stated: “Our temperature proxy network was drawn from a broader Australasian domain (90°E–140°W, 10°N–80°S) containing 62 monthly–annually resolved climate proxies from approximately 50 sites (see details provided in Neukom and Gergis, 2011).” The table of coordinates in Gergis et al 2012, as you observe, contains a number of errors, mostly arising from E and W, N and S being treated as the same (all S and E). However, the location map of Gergis et al 2012 (Figure 1) shows the sites in correct locations – even Palmyra is shown N of equator. But here’s something odd from the location maps. You say: “Palmyra is corrected [by PAGES2K] for longitude, but not for latitude (probably just a second clerical error).” In fact, in the location maps, Palmyra is shown in correct location in G12, with the error being introduced in PAGES2K.

“The more expansive region for the proxy network was already presented in Gergis et al 2012”

Gosh, I missed that in the main text. It’s confusing..and the “target” vs. expanded area is not explained in the figure 1 caption…which I should have analyzed closer among all the “target area” business. I’ll shift blame to a prior grogginess induced by too much time trying to match something from Pages2k to Neukom & Gergis..

Regarding Palmyra, yes, the G et al 2012 map places it in the right place. This is a very confusing area for review, caused by the inconsistencies in the products themselves. But I contend the erroneous Palmyra data still comes from Gergis et al. I should have specified Table 1 more. At Table 1 the Palmyra coordinates are 162 and 6. In the Pages2k Metadata the Palmyra coordinates are -6 and -162. So, on account of me sayin’ both coordinates are wrong, it would seem they were both corrected. Or the reverse. However…Gergis et al. uses °S while Pages2k uses °N. So while latitude “6” is an error for Gergis, latitude “6” would be correct for Pages2k. However, Pages2k shows “-6.” Of course, this does nothing to explain the perhaps bigger issue of how the Gergis et al. map shows Palmyra in its correct location when the “data” in the same paper puts it very far away.

Another quirk is in Supplementary “Figure S1 Comparison between the area-weighted mean annual temperature within the PAGES 2k regional domains as plotted in Figure 1 (land and ocean) and global mean annual temperature. All data are from HadCRUT4 (ref. 9).”

The global ‘measured’ temperature range is from -0.6 to + 0.5 deg C, for a span of 1.1 deg.

The average of the study domains is from -.08 to +0.8 for a larger span of 1.6 deg C.

In the study domains, compared to instrumental global, the past has been cooled and the present warmed resulting in a stronger trend. This is a pattern seen elsewhere.

Sorry, replace -.08 with -0.8 in 7.37 PM post.

There is extensive use of the correlation coefficient, r, as well as the sometimes related r^2 in the original Neukom et al paper and in the SI for the present paper. There is also the note that “Proxy records with values that scale negatively with temperature (as listed in Database S1) were inverted, then all records were standardized to have zero mean and unit variance relative to the period of common overlap for all records within a region” in reference to the alternative reconstructions. Then the note that (for Australasia) “To avoid variance biases due to the decreasing number of predictors back in time, the reconstructions of each model were scaled to the variance of the instrumental target over the 1921-1990 period.”

Is it possible that one or more of these 3 procedures produces a narrower range of correlation coefficients that are all positive, rather than the more usual wider range from negative to positive?
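A rough Python sketch of how the first two steps interact with r, for what it is worth. The numbers are made up, the helper names (`pearson_r`, `standardize`) are mine, and the standardization here runs over the whole toy series rather than a "period of common overlap". It only illustrates a general property of Pearson correlation: flipping the sign of a proxy flips the sign of r, while standardizing or rescaling the variance leaves r unchanged.

```python
from statistics import mean, pstdev

def pearson_r(x, y):
    """Plain Pearson correlation coefficient of two equal-length lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    ssx = sum((a - mx) ** 2 for a in x)
    ssy = sum((b - my) ** 2 for b in y)
    return cov / (ssx * ssy) ** 0.5

def standardize(x):
    """Zero mean, unit variance (population SD) over the whole series."""
    m, s = mean(x), pstdev(x)
    return [(v - m) / s for v in x]

# Hypothetical values, for illustration only.
target = [0.1, 0.3, 0.2, 0.5, 0.4]   # stand-in for an instrumental target
proxy = [9.0, 7.5, 8.0, 6.0, 7.0]    # a proxy that scales negatively with it

inverted = [-v for v in proxy]                               # step 1: invert
standardized = standardize(inverted)                         # step 2: standardize
rescaled = [v * pstdev(target) for v in standardized]        # step 3: match target variance

r_raw = pearson_r(proxy, target)
r_inverted = pearson_r(inverted, target)
r_standardized = pearson_r(standardized, target)
r_rescaled = pearson_r(rescaled, target)

print(r_raw, r_inverted, r_standardized, r_rescaled)
```

So in this toy case only the inversion step changes the sign of r; the two scaling steps change units but not the correlation. Whether that accounts for the all-positive range in the paper is a separate question that this sketch does not answer.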

The proxy data were correlated against the grid cells of the target (HadCRUT3v SONDJF average).

Given that several of the proxies are within 10 degrees of the Equator, where seasonality has a rather different form than in mid-latitude zones, why is this done, for example, for corals?

Australian science is not often backward, so one might ask why the “Australasian” reconstruction includes no study from the Australian mainland (that is, excluding the tree rings from Tasmania and the couple of offshore coral locations, Abrolhos Is & Ningaloo Reef).

A cynic might argue that good quality work has been done, but that the results were not favourable to the cause.

Geoff says:

Now that you point it out, the lack of Australian mainland proxies is quite startling. In the Neukom and Gergis 2012 listing, they include tree ring series (Callitris) from Western Australia and Northern Territory, but both are screened out in both Gergis et al 2012 and Gergis2K. PAGES2K did include one Victoria tree ring series that had been screened out in Gergis et al 2012 – Baw Baw, Victoria from Brookhouse.

Am I missing something here? “SD units” aren’t valid to average together when they come from two totally different series, are they?

Otherwise, I could say

Series 1: 0.1, 0.1, 0.1, 0.1, 0.1, 10 => OMG HUGE hockey stick in SD Units

Series 2: 10000, 10100, 10200, 10100, 10300 => Basically no change in SD units

Average the two together => huge change in “SD Units”

“Visually convert it back to the original series” whatever that means.

Voila, huge hockey stick despite the fact that nothing has happened, as the changes in the first series are actually wholly negligible compared to the second.
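A minimal Python sketch of the toy example above, for anyone who wants to check the arithmetic. The second series in the comment has only five values, so a sixth (10200) is assumed here to make the lengths match. The function name `to_sd_units` and the use of the population SD over the whole series are illustrative choices, not anything taken from PAGES2K.

```python
from statistics import mean, pstdev

def to_sd_units(series):
    """Convert a series to SD units: subtract its mean, divide by its SD."""
    m, s = mean(series), pstdev(series)
    return [(x - m) / s for x in series]

series_1 = [0.1, 0.1, 0.1, 0.1, 0.1, 10.0]
# Sixth value (10200) is an assumption; the comment lists only five.
series_2 = [10000, 10100, 10200, 10100, 10300, 10200]

z1 = to_sd_units(series_1)
z2 = to_sd_units(series_2)

# Simple (unweighted) average in SD units versus in the original units.
sd_average = [(a + b) / 2 for a, b in zip(z1, z2)]
raw_average = [(a + b) / 2 for a, b in zip(series_1, series_2)]

print("series 1 in SD units:", [round(v, 2) for v in z1])
print("series 2 in SD units:", [round(v, 2) for v in z2])
print("average in SD units: ", [round(v, 2) for v in sd_average])
print("average in raw units:", [round(v, 2) for v in raw_average])
```

One thing the sketch makes plain: standardizing forces every non-constant series to an SD of exactly 1, so series 2 is not "basically no change" in SD units either. The original amplitudes are simply gone from both, and the lone spike in series 1 comes out at about 2.24 SD regardless of how small it was in the original units.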

Using SD units in that way is a common practice at all levels of the multiproxy analyses of the CPS type. When no good alternative method is available, it’s a natural choice. It has severe weaknesses, but it may still be the best of the alternatives.

What’s done in the PAGES2K paper on Continental-scale temperature variability during the past two millennia is perhaps a logical extension of that approach, but in this case it’s certainly possible to argue that it would be better to use regional scaling without the additional steps of reintroducing SD weights (multiplied by the area) again for each region and scaling once more by comparison with the instrumental GMST.

The final outcome can probably be interpreted also as a single CPS composition where the weights of each proxy are determined by a complex and rather obscure process. Perhaps someone will go through the process and list those weights. There might be some rather interesting cases in such a listing.

Why is my objection invalid, then?

It seems to me that your objection is exactly the same one that McIntyre made in the post itself: this is a way to overweight Gergis which has a small amplitude and a large SD, thereby yielding a world-wide hockey stick.

Well, if you’re right, don’t the objections go beyond what happens in this one particular case? I mean, I could take a totally constant series, arbitrarily divide it into two parts such that one of them has a huge change in “SD units”, and then average the “SD units” and get a hockey stick (or whatever other result I wanted).

Why is there any validity to this?

I expect that most of us readers of climateaudit agree with you.

Fair enough. I guess what I’m trying to get isn’t gonna happen here. I’m looking for either a “no that’s not at all what they did and here’s why” or a “yes, that’s what they did and it’s as bad as you think”.

If what they did is what it seems to me like they did, it could not have less validity, and it is totally pointless to argue about whether the results “hold”. This method could happily average together a series with no net temperature change and a series with 0.1 degree temperature change and come out with 10 degrees of temperature change. The graph thus produced is wholly meaningless and it should be replaced by one featuring a genuine averaging of the actual temperature changes. Anybody done that exercise?

Again I realize I am a total ignoramus on these issues.
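The "genuine averaging" asked for above can be contrasted with the SD-unit route in a few lines of Python. Everything here is hypothetical: two made-up regions, made-up area weights, five 30-year bins of which the first four stand in for the reference period, and helper names of my own. The final "visual scaling" back to deg C is not modelled, and this is not the PAGES2K code, only a sketch of the two ways of averaging.

```python
from statistics import mean, pstdev

# Hypothetical 30-year bin averages in deg C. The first four bins play the
# role of the reference period; the last bin is the closing value.
small_amplitude = [-0.02, 0.02, -0.02, 0.02, 0.10]
large_amplitude = [-0.40, 0.40, -0.40, 0.40, 0.40]
area_weights = [0.6, 0.4]   # assumed weights, summing to 1
REF = slice(0, 4)           # assumed reference period (first four bins)

def to_sd_units(series, ref=REF):
    """Standardize a series against the mean and SD of the reference bins."""
    m, s = mean(series[ref]), pstdev(series[ref])
    return [(x - m) / s for x in series]

def weighted_average(regions, weights):
    """Weighted average across regions, bin by bin."""
    return [sum(w * v for w, v in zip(weights, values))
            for values in zip(*regions)]

regions = [small_amplitude, large_amplitude]
avg_deg_c = weighted_average(regions, area_weights)
avg_sd = weighted_average([to_sd_units(r) for r in regions], area_weights)

# Share of the closing value contributed by the small-amplitude region.
share_deg_c = area_weights[0] * small_amplitude[-1] / avg_deg_c[-1]
share_sd = area_weights[0] * to_sd_units(small_amplitude)[-1] / avg_sd[-1]

print("closing value, deg C average:", round(avg_deg_c[-1], 3))
print("closing value, SD-unit average:", round(avg_sd[-1], 3))
print("small-amplitude share (deg C):", round(share_deg_c, 3))
print("small-amplitude share (SD units):", round(share_sd, 3))
```

In this made-up case the small-amplitude region supplies roughly a quarter of the closing value when averaging in deg C, but nearly nine-tenths of it when averaging in SD units, because its closing value sits 5 SD above a very quiet reference period.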

Pekka, you and I have both spent time at And Then There’s Physics. There or elsewhere you must have seen repeated claims that “There have been a dozen global reconstructions and every single one shows a hockey stick. There has never been one that doesn’t.”

But I have certainly been getting an impression from recent posts here that one could very easily make a reconstruction that shows no hockey stick by making slightly different choices of methodology at various points along the way.

Aside from detail questions about the exact choices made, do you find it as concerning as I do that paleo scientists have flipped a coin a dozen times and gotten heads every time? (If that’s an accurate description.)

Of course you get an impression. That is the whole point for climateballer McIntyre. To introduce some impression for the congregation.

Guess why the chief climateballer will not produce that reconstruction with no hockey stick? Just by making slightly different choices along the way.

Is it because he doesn’t know what a hockeystick is? The HSI-blunder.

ehak, do you actually read the posts you comment on? McIntyre produced one just two posts ago: https://climateaudit.org/2014/10/28/warmest-since-uh-the-medieval-warm-period/

And if you will read this post here, you will see that he just produced another. Just don’t do the rescaling that the PAGES people did, and no hockey stick. Why is this difficult?

Ehak off the bench turns the ball over with a fumble.

=============

ehak

Your one-line comments obviously mean something to you, and probably to the community at sites where you usually hang out. However they are baffling to the rest of us who have no idea what you are talking about. Willard’s posts suffer the same problem.

So, instead of just saying “Is it because he doesn’t know what a hockeystick is” – which seems unlikely given Steve’s involvement in this story – why don’t you expand the point and give your preferred definition? People here may not agree, but at least both sides will understand any points of disagreement.

In that case just drop it and don’t use it.

Exactly

“Severe weaknesses” is what this post is all about (and the preceding post).
