The PAGES2K Arctic reconstruction uses Gifford Miller’s Hvitarvatn (Iceland) data upside down. The error “matters” because this series is one of rather few PAGES2K series that show a Hockey Stick. Such gross errors ought to be corrected before the data is cited for policy purposes or said to confirm previous studies.

PAGES2K, whose lead author is Darrell Kaufman, a longtime promoter of varvology, expands the Kaufman et al 2009 sediment network to 22 series. The new series include Hvitarvatn, Iceland, where Kaufman used the data upside down relative to the interpretation of Gifford Miller, the original author and a very eminent paleoclimatologist. Miller’s report on Hvitarvatn was previously discussed at CA here.

Here is a plot of the first eight PAGES2K Arctic sediment series (log basis). Within these eight series, the series on the right second from the top, Hvitarvatn (Larsen 2011), is the only one showing a dramatic increase in 20th century values relative to medieval values.

Figure 1. First eight Arctic sediment proxies (log scale). Hvitarvatn is the “Larsen2010” series on right side second from top.

Hvitarvatn was also discussed at length in Miller et al 2012, an interesting article previously discussed at CA here. In that blog post, I presented the graphic of Hvitarvatn varve thicknesses from Miller et al, as shown below. Miller interpreted the increased varve thicknesses at Hvitarvatn after 1275, together with radiocarbon information on kill dates at Baffin Island, as demonstrating the development of LIA cold, and the narrow MWP varves as evidence that ice caps were not in close proximity. Miller stated:

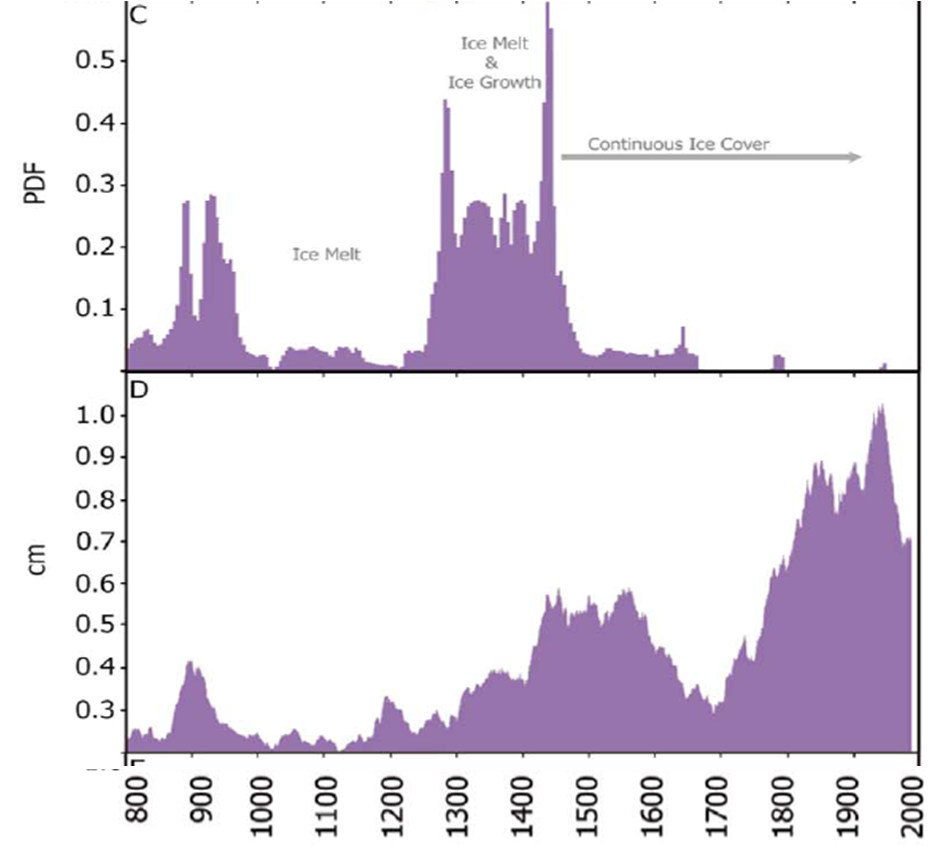

Here we present precisely dated records of ice-cap growth from Arctic Canada and Iceland showing that LIA summer cold and ice growth began abruptly between 1275 and 1300 AD, followed by a substantial intensification 1430–1455 AD.

From Miller et al 2012 Figure 2C-D. (c) Ice cap expansion dates based on a composite of 94 Arctic Canada calibrated 14C PDFs. (d) 30-year running mean varve thickness in Hvítárvatn sediment core HVT03-2 [Larsen et al., 2011].

Miller et al discussed varve changes at Hvitarvatn at length, including the following:

Thus, supra-decadal changes in Hvítárvatn varve thickness track the intensity of Langjökull erosion, and serve as a proxy for ice-cap size [Larsen et al., 2011]. The response time of Langjökull outlet glaciers to abrupt summer cooling is approximately a decade and the estimated ice-cap equilibration time is 100 years (H. Björnsson, unpublished data, 1997-2011).

Consequently, Langjökull outlet glaciers will begin to advance within a decade following abrupt summer cooling, although the ice cap will not attain its new equilibrium dimensions for a century. We therefore expect that times of abrupt snowline lowering derived from the Baffin Island kill dates should correspond with the onset of multidecadal trends of increasing varve thickness in Hvítárvatn. To test this prediction we analyzed replicate varved sediment cores from Hvítárvatn, where the past 1200 years is contained in the upper 8 m of the sediment fill. The varve chronology since 800 AD is constrained by seven historically dated tephras, providing 6 year temporal precision [Larsen et al., 2011]. The 30-year running mean varve thickness integrates the interannual to decadal variations in hydrologic efficiency of the delivery systems and tracks the evolution of Langjökull’s growth and decay in response to summer temperature changes over the past 1200 years.

From both the Canadian evidence (many sites became ice-covered in the late 13th Century and remained so until the past decade) and Icelandic evidence (consistently thick varves following the late 13th Century), we can conclude that multidecadal average summer temperatures never returned to those of Medieval times until the 20th Century…

The expansion and subsequent retreat of Langjökull recorded by a peak in varve thickness between 850 and 950 AD also coincides with ice-cap growth in Arctic Canada. This is followed by two centuries of unusually thin varves between 950 and 1170 AD, indicative of reduced glacial erosion in response to increased summer warmth. This suggests that the lack of ice-kill dates on Baffin Island during the same interval is the result of ice-melt rather than extended cold, a finding that could not be determined from the dated vegetation record alone.
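The 30-year running mean that Miller et al describe can be sketched in a few lines. This is only an illustration under stated assumptions: the thickness values are invented, not the HVT03-2 core data, and the function name `running_mean` is hypothetical rather than anything taken from Larsen et al or Miller et al.

```python
def running_mean(values, window=30):
    """Trailing running mean; returns len(values) - window + 1 values."""
    if window <= 0 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    total = sum(values[:window])
    out = [total / window]
    for i in range(window, len(values)):
        # Slide the window forward by one year.
        total += values[i] - values[i - window]
        out.append(total / window)
    return out


# Hypothetical annual varve thicknesses (mm): 40 thin years, then 40 thick years.
thickness = [2.0] * 40 + [5.0] * 40
smoothed = running_mean(thickness, window=30)
```

On Miller's reading, the rise in the smoothed series marks ice-cap growth and summer cooling, not warming.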

Turning now to the PAGES use of this record. The PAGES2K SI says that its proxy records must “exhibit a documented temperature signal” and be “published in peer reviewed literature as a proxy for temperature”:

The proxy records selected by the Arctic2k group for the Arctic continental-scale temperature reconstruction (Fig. S7) meet the following criteria: (1) situated north of 60°N, (2) extend back in time to at least 1500 CE, (3) have an average sample resolution of no coarser than 50 years, (4) include at least one chronological reference point every 500 years, (5) exhibit a documented temperature signal, and (6) are published in peer-reviewed literature as a proxy for temperature, although not necessarily calibrated to temperature (i.e., some records provide only a relative measure of temperature with unknown transformations between the proxy measurement and temperature).

Unfortunately, they did not also require that the record be used in the same orientation as in the published literature. In the case of Hvitarvatn, the SI states that they used the record in a positive orientation, i.e. opposite to the interpretation of Miller et al 2012.
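To make the orientation point concrete, here is a minimal sketch of what the sign choice does to a standardized series. Everything in it is assumed for illustration: the thickness values are invented and `to_temperature_index` is a hypothetical helper, not the PAGES2K procedure.

```python
from statistics import mean, pstdev


def to_temperature_index(series, orientation):
    """Standardize a proxy and apply its orientation.

    orientation = +1: higher proxy values are read as warmer.
    orientation = -1: higher proxy values are read as colder.
    """
    if orientation not in (1, -1):
        raise ValueError("orientation must be +1 or -1")
    m, s = mean(series), pstdev(series)
    return [orientation * (x - m) / s for x in series]


# Hypothetical varve thicknesses: thin early, thick late.
thickness = [2.0, 2.0, 2.0, 5.0, 5.0, 8.0]
thick_is_cold = to_temperature_index(thickness, -1)  # the published reading
thick_is_warm = to_temperature_index(thickness, +1)  # the positive orientation
```

The same thick late varves end up as the coldest point under one orientation and the warmest under the other, which is why the direction has to come from the published interpretation.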

PAGES2K also used a number of Baffin Island varve series. In my previous post on Miller et al 2012, I observed that the varve data on Baffin Island lent itself to the interpretation that Miller had placed on the Hvitarvatn, Iceland data – i.e. thick varves indicating glacier proximity, an interpretation of varves that is consistent with interpretations of Ice Age recession.

BTW I strongly commend Kaufman for actually reporting the orientation of each proxy. Previous authors e.g. Neukom et al 2011 have failed to do so, enabling Neukom’s upside down use of Quelccaya data to remain undetected until I noticed it the other day because of PAGES2K disclosure.

Readers should not conclude that Miller has argued that MWP warmth exceeded modern warmth. Miller has argued that recent Arctic glacier melt is exposing sites that were ice covered through the MWP:

The 24 Canadian sites that became ice-covered 800 – 900 AD (Table S4) and did not melt again until the past decade demonstrate that multi-decadal average summer temperatures in Arctic Canada now exceed those of Medieval times.

This is a line of argument that, in my opinion, might well be used to argue that modern warmth has surpassed MWP warmth. Assembling such facts would be far more persuasive to me than multiproxy varvology with upside down data.

39 Comments

“This is a line of argument that, in my opinion, might well be used to argue that modern warmth has surpassed MWP warmth.”

In the Arctic perhaps.

the problem is that many such studies were already done and the general trend is that MWP was warmer or much warmer than today (the tree line in Quebec, permafrost extent and tree line in Russia, etc).

completely agree Ivan

as a layman the tree line studies (or any plants for that matter, tree stands are the most studied for paleo I think) are (or should be) the obvious first indicators of past v present global climate change.

do that first & then get into the nitty gritty of the ring data if you think it will add or clarify the data.

ps – Steve has various past posts on this topic but they stop around 2007, I think he then just gave up trying to make this point (my opinion only):-)

I’m so tired of this kind of nonsense coming up over and over again. Here’s my rule of thumb:

You can’t do a multiproxy study without first intensively studying each proxy.

You’d think, after the epic multiproxy failures in the past, that people would have learned to do just that … but no, here we are again dealing with fools who just grab a bunch of proxies and throw them in the computer hopper for processing. At least these guys listed the orientation, but still …

Ah, well. I take refuge in the fact that the PAGES2K study has provided support for a mathematical theory of mine, which is that

where C is a constant, A is the number of named authors in a given study, and SV is the study’s actual value …

w.

We can even name Willis’ law: the law of diminished personal accountability (or dilution of accountability).

“fools who just grab a bunch of proxies and throw them in the computer hopper for processing.”

That’s hardly the case here. In the Miller paper cited here, Darrell Kaufman is thanked in the acknowledgements. He and Miller have been co-authoring papers since at least 1990, including the Science 22 series one mentioned here.

It’s quite possible there is an error in the SI table.

Steve: Racehorse, Kaufman made multiple errors in Kaufman et al 2009. Why wouldn’t he make similar errors in 2013? Stay tuned for a real beauty. BTW if Kaufman did invert Hvitarvatn, why wouldn’t he also invert the Baffin Island series?

The real problem is Kaufmannian varvology for which Willis’ description is reasonably apt.

Willis, equally one cannot begin a study of multi-proxy based reconstructions without completely constrained criteria for the selection and non-selection of proxies. All proxies that conform to the selection criteria have to be included and those that do not meet the stringent criteria are rejected. Moreover, there needs to be an a priori archiving of the selection criteria.

It seems scientists can easily sidestep the idea of ‘first intensively studying each proxy’ because they can simply point to the original author as having done all that work. The reasoning goes:

1. Does original author/originator declare it to be a proxy?

2. Does proxy pass peer-review and thus appear in the literature?

…passing those two hurdles, voila!

But why not? Unless you are going to publish specifically against (if that’s the right word) individual proxies such that the literature can be populated with divergent views of certain ones, why shouldn’t these author groups be able to ‘get away’ with assembling a multi-proxy study using a review of the literature? Why even bother having ‘the literature’ in that case?

Now, when someone distorts/misreads the existing literature and the original proxy authors in the course of assembling a multi-proxy study, that is a problem. It’s a problem you hope is caught itself by peer review, but often that system is fallible enough to not have a chance because it’s not an ‘audit’ per se. I guess the question becomes, what to do within the literature itself such that it reflects the results of such audits should it stand (is this where ‘comments’ come in?) If the literature is unchanged, these problems are easily repeated by folks who are just going by what’s in the literature.

Salamano – I always enjoy reading your posts.

It is worth pointing out that even when someone like Steve points out there is an issue and does so via a published response it is still ignored.

I have argued previously that it cannot be reasonable for large teams of researchers to be continually using data which none of them understand.

http://amac1.blogspot.co.uk/2011/06/tiljander-data-series-appear-again-this.html

In particular, this reminds me of the oft-quoted statement held over at RealClimate (http://www.realclimate.org/index.php/archives/2005/02/dummies-guide-to-the-latest-hockey-stick-controversy/)

“Yes. Their argument since the beginning has essentially not been about methodological issues at all, but about ‘source data’ issues. Particular concerns with the “bristlecone pine” data were addressed in the followup paper MBH99 but the fact remains that including these data improves the statistical validation over the 19th Century period and they therefore should be included.”

A possible conclusion is that the contamination of this bristlecone data (that does exist in the literature) has actually EXPOSED an even truer climate signal within this type of tree, yes? It’s fascinating that the science has matured to the point where they can determine the orientation of contaminants, much less the host proxy.

Salamano – “A possible conclusion” but for me a wrong one.

I will fall back onto poor quality control leading to bad data that is not trapped by the subsequent processing. The bad data is mishandled differently in the modern period, which in turn can lead to biased, misleading results by enhancing the blade and attenuating the shaft.

Well I was being a bit cheeky with that last paragraph there, but I will say that the argument of “poor processing” or “poor quality control” is being sidestepped by the (a) better validation statistics when using the poor data, compounded by (b) the weaker validation statistics without it. Apparently there’s something in the rules out there that says if the results are stronger with the data included and weaker without it, it must be included. It doesn’t matter what the issue is in the first place, it has obviously been ironed out through the proxy itself with (in some cases) an even better representation of the climate signal as a result.

This particular round-robin volley can continue forever, or there’s got to be some sort of new approach. It’s going to take time to whittle away bad individual proxies from the literature (because they’re in there already). It is like beating back zombies.

Salamano – I know it is an approach of yours to paraphrase someone else’s argument in a devil’s advocate sort of way.

re “Apparently there’s something in the rules out there that says if the results are stronger with the data included and weaker without it, it must be included.”

Personally I would disagree with that but I would also need to understand who exactly is using that argument and in what context.

Steve: Wahl and Ammann used that argument using the RE statistic in favor of including bristlecones. I’ve never seen the argument by a real statistician or in any context other than WA’s effort to launder MBH.

The above quote from Gavin Schmidt (in my mind) is where that argument is being made, and the link provides the context.

Salamano – ok – yes an absolutely rubbish argument from Gavin Schmidt.

“You’d think, after the epic multiproxy failures in the past, that people would have learned to do just that”

Failures? They have all been uncritically accepted by the ideologically-driven climate psientists who inform the opinion-makers (art and humanities graduates) who influence and actively lobby the politicians (greedy art and humanities graduates) who make policy.

What they have learned is that if you keep telling the same lie, people will believe you.

Willis says ‘yer can’t do a multi proxy study w/out first

intensively studying each proxy. Given Tiljander et al here’s

an overview post at WUWT from yew ter us literary but science

challenged types that can’t read the code.Proxies as the –

ground – beneath – our – feet – fer – long – past – climate –

data, say, how solid or shifting?

A worthy catchphrase, on hearing something unlikely but vaguely plausible.

Does (perhaps) Kaufman reside south of the equator, where everything is known to be upside down?

“This is a line of argument that, in my opinion, might well be used to argue that modern warmth has surpassed MWP warmth. Assembling such facts would be far more persuasive to me than multiproxy varvology with upside down data.”

True, but there is a major problem with using the age of datable remains being uncovered by retreating glaciers as proof of climate change: the inherent lack of unprecedentedness.

While dating the roots of a tree that has just melted out of a glacier as being “X” years old is indeed very strong evidence that the climate hasn’t been warmer than now for “X” years, it is also, unfortunately, just as strong evidence that climate was even warmer than now X + a few hundred years ago.

Steve: agreed. 🙂

So then you core the tree which the melting glacier revealed and slap that proxy onto the date where it froze over. A veritable time machine. (if it goes down from there you have some explaining to do – or not)

I’m mystified by the references to which way up the proxies have been used. It seems to me that any proxy for temperature doesn’t have a “right way up”, all it has is a method for reading it as temperature. In other words, saying whether proxies have been used as positive or negative is a nonsense – surely the only way a proxy can be used is via the correct mechanism for that proxy. Otherwise, it just isn’t a proxy.

The problem I’m having with your comment is similar to the problem I’m having with the entire field: How do you know that these things are proxies for temperature? Look at those graphs and tell me with a straight face that you would have chosen them as “a way of measuring temperature” based on the graph. You have got to tell me that you have some a priori information on the way the proxies react to temperature, or these are just worthless.

And if you do (claim to have) some a priori information, then using them upside down from that is wrong. Certainly deciding on which way to use them based on how they fit with your hockey stick is way wrong.

Agreed. I found the wording very difficult, even though it seems such a simple message to get across. Perhaps this would be better: It is not possible to use a proxy “upside down” because it doesn’t have a “right way up”, it only has an algorithm. If you need to say which “way up” a proxy is, then it isn’t a proxy.

It really does appear that none or virtually none of the “proxies” being used in these studies are actually proxies. At best they might be able to give some qualitative clue to temperature, mixed in with other signals (eg. precipitation in the case of trees) but they cannot quantify temperature.

This was Mann’s reply to McIntyre, which is worded in a way that fools other scientists into believing the wrong things about Mann et al and McIntyre, that McIntyre is just ignorant. Mann says allegations of upside-down usage are ‘bizarre’, as regression algorithms are blind to the sign of the proxy. Martin Vermeer tried to defend this as a factual statement. For accuracy, Mann might as well have said, Accusations of upside down usage are bizarre, bananas are green before they are yellow, but that would not have fooled other scientists and blog climate activists into coming to his defense.
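The narrow sense in which a regression is "blind to the sign" can be shown with a one-predictor least-squares fit. The sketch below uses invented numbers and hypothetical helper names (`ols_fit`, `predict`); it is not the algorithm of any of the papers discussed.

```python
from statistics import mean


def ols_fit(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def predict(x, slope, intercept):
    return [intercept + slope * xi for xi in x]


# Hypothetical calibration-period proxy and temperature values.
proxy = [1.0, 2.0, 3.0, 4.0, 5.0]
temp = [0.1, 0.3, 0.2, 0.5, 0.6]

slope_up, icpt_up = ols_fit(proxy, temp)
flipped = [-p for p in proxy]
slope_dn, icpt_dn = ols_fit(flipped, temp)

fit_up = predict(proxy, slope_up, icpt_up)
fit_dn = predict(flipped, slope_dn, icpt_dn)  # same fitted values as fit_up
```

The fitted values are identical because the coefficient absorbs the flip. That is the whole difficulty: the orientation is then set by whatever correlation exists in the calibration period, which can contradict the physical interpretation given by the original author.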

Mike Jonas – the reason that a proxy has to have a “right” side up is that there is a physical mechanism ( or hypothesis of one) about WHY it is a proxy for temperature. Without that, one can find all sorts of time series that happen to correlate: stock prices, the number of pirates worldwide, and women’s average skirt length come to mind, to name a positive and a few negatives… 🙂

TerryMN – please see my reply to miker613 above – It is not possible to use a proxy “upside down” because it doesn’t have a “right way up”, it only has an algorithm. If you need to say which “way up” a proxy is, then it isn’t a proxy.

The correlations you cite (stock prices, pirates, skirts), just like tree rings and temperature, can be interesting but they can’t be used to quantify anything. [From historical skirt length and/or pirate data only, identify all the dates on which the Dow Jones index was at, say, 1,000.]

Mike Jonas – With proxy records there usually is an ex ante explanation / hypothesis on how a proxy responds to temperature; ie high latitude or altitude trees are primarily temperature limited, so bigger tree rings equal higher temps, JJA. Or, in the case of some lake varves – thicker sediment means more run-off, caused by more snow in the winter, and so thicker varves equal lower temp DJF…

And yes, there can be and are opposite correlations for each of those proxy classes because of a theory of how a given site, species, etc. responds to (in this case) temperature.

But you need to start with someone who promotes and publishes a theory on why a *given* proxy record at a *given* site acts the way it does, and has gone to the effort of testing and publishing that. You cannot just throw a collection of time series into a hopper (and do a two-sided test to choose correlation) then declare, ex post facto, that “I really like this, and this, and especially this” without any prior explanation of why a given proxy would act that way – especially when two of the same class, in the same region, may contradict one another, but because “you can’t use them upside down” you’d be able to use both – this becomes an exercise in cherry picking of what is most probably random correlation.
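The hopper problem can be sketched with pure noise. In the illustration below, random walks containing no temperature signal are screened against a rising target with a two-sided correlation test, and each survivor is oriented after the fact. All of it is assumed for illustration: the series count, the 0.5 threshold, the linear target and the helper names are hypothetical and come from no published method.

```python
import random
from statistics import mean


def pearson(a, b):
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / (va * vb) ** 0.5


def random_walk(n, rng):
    level, out = 0.0, []
    for _ in range(n):
        level += rng.gauss(0.0, 1.0)
        out.append(level)
    return out


rng = random.Random(0)
n_years, n_cal = 300, 50
target = [float(i) for i in range(n_cal)]  # hypothetical rising instrumental record
series = [random_walk(n_years, rng) for _ in range(200)]

selected = []
for s in series:
    r = pearson(s[-n_cal:], target)
    if abs(r) > 0.5:                    # two-sided screen
        sign = 1.0 if r > 0 else -1.0   # orientation chosen ex post
        selected.append([sign * v for v in s])

# Average the survivors year by year.
composite = [mean(col) for col in zip(*selected)]
composite_r = pearson(composite[-n_cal:], target)
```

Because every survivor has been flipped to agree with the target, the composite is guaranteed to track the target over the calibration window, even though no series contains any signal.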

My opinion, ymmv.

TerryMN

A recent relevant paper from Australasia uses DJF seasons for temperature calibration. Yet, in the SH, the main growth spurt for many species is NDJ. Maybe the authors have established that their species add rings in DJF, I don’t know. However, there is typically a simpler, downward temperature curve for DJF, as opposed to sometimes an inverted U in NDJ. Inverted U responses have a difficulty in interpretation as two different temperatures can lead to similar rings, as many have pointed out here before.
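The interpretation problem with an inverted U can be shown with a toy quadratic response. The function and all of its parameters are invented for illustration and describe no particular species or site.

```python
def ring_width(temp_c, optimum=15.0, peak=2.0, curvature=0.02):
    """Toy inverted-U growth response: widest ring at the optimum temperature."""
    return peak - curvature * (temp_c - optimum) ** 2


cool = ring_width(10.0)     # 5 degrees below the assumed optimum
warm = ring_width(20.0)     # 5 degrees above the assumed optimum
at_peak = ring_width(15.0)
```

The cool and warm cases give the same ring width, so the width alone cannot say which side of the optimum the tree was on.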

This is but one more potentially contentious point to add to the long and growing list.

With all proxy work calibrated against instrumental temperatures, I continue to believe that perhaps the largest source of error is the “adjusted” temperature record itself, especially land ones from USA.

Hi Geoff – I am in complete agreement that trees (in general) have an “upside down U” growth correlation with their preferred amounts of temperature, moisture, and fertilizer. It makes divining just one of the three especially confounding.

Many of the papers frequently look at which temperature point is best correlated to the resulting growth, and then state that the proxy is a proxy for temperature at that time period. Yamal is like this.

MikeN, can you please list 5 or 6 of the many papers that do this? Thanks!

Merging voila methods with Kaufmannian varvology yields:

Kaufmannian Method Voilavarvology (KMV)

Conceptually, KMV operates like an hour glass in that it can theoretically measure the same quantity either upside down or right side up.

Try calling Kaufman this time around.

Steve: What would you like me to talk about? I’m sure that he’s a pleasant enough guy. Should we chat about the NBA playoffs? In 2009, I sent him a cordial and polite email notifying him of problems. He told the Climategaters that he had received “hate mail”. If he wants to explain why he uses data upside down or uses contaminated data, it makes more sense for him to show why my analysis is wrong and this is best done in writing and not by chit-chat. Any comment by him posted at CA showing why I am wrong would be welcome and given prominent attention.

Out of interest are you the same MikeN who posted here?

http://amac1.blogspot.co.uk/2011/06/voldemorts-question.html

Yea. That particular figure was highlighted on this site as well.

If I remember correctly, he asked you to call him then. Perhaps you could ask why you weren’t named in the corrigendum.

Steve: I have no interest in asking him about corrigendum naming. I attribute it to the pointless pettiness that is too characteristic of realclimate scientists and am content to leave it at that. If Kaufman thinks that I’ve made any incorrect statements in these posts, his comments are welcome here.

Nature Geoscience has just announced a ‘Journal Club’, involving a live discussion of the PAGES2k paper on Google+ on May 9th. See their tweets – they are inviting questions.

Also, they say that the PAGES2k is available free to download to anyone from now until May 10th.

I wonder who will be moderating this discussion?! Also I wonder if anything arising from this live discussion will be taken into consideration by the IPCC? Hmmm … probably not, I seem to recall that the new, improved “rules” specifically exclude both blogs and “social networking” sites as appropriate sources of material for inclusion in IPCC reports.

Btw, I just d/l the pdf version which includes the following notation (p. :

Does anyone happen to know what might have been “corrected”?

Steve:
