One of the really annoying things about Wahl and Ammann was their failure to cite our prior analysis of various MBH permutations and then, having failed to cite these prior analyses, reproaching us for supposedly “omitting” them. For example, in MM05 (EE) we discussed the relative impact of using 2 or 5 covariance PCs or using correlation PCs; we did so largely because these issues were already in play through our Nature correspondence and realclimate postings, where Mann had raised the same issues. (Wahl and Ammann don’t cite or even acknowledge Mann.)

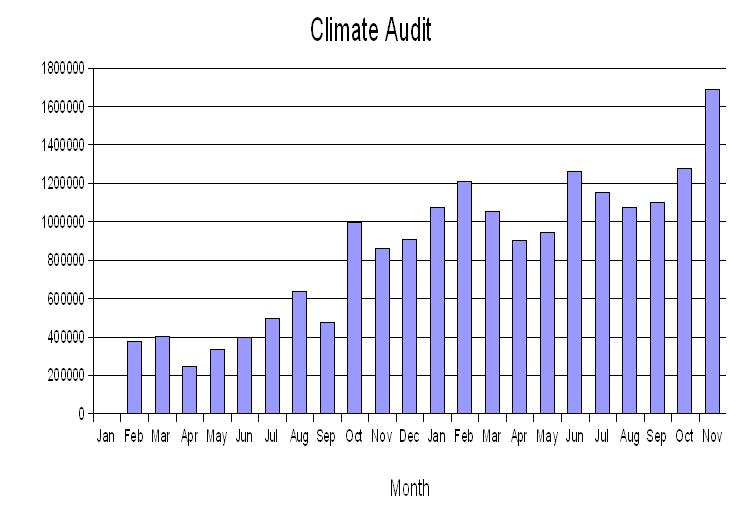

However, when Wahl and Ammann carried out similar analyses, they did not refer to or reconcile to our prior analyses, leaving a highly distorted record. Here’s a graphic that I presented at KNMI, showing the virtual identity of MM05 (EE) results and Wahl and Ammann Scenario 5 results. (I spent several hours with Juckes coauthor Nanne Weber at KNMI and she did not inquire about this graphic.)

Left: Archived results from MM05 (EE) Figure 1. Orange: MBH98 as archived; red: MM05b emulation of MBH98; magenta: using 2 covariance PCs from NOAMER network. All smoothed. In addition, MM05b reported that 15th century results using correlation PCs were “about halfway” between the results with 2 covariance PCs and that “MBH-type” results occur if the network is expanded to 5 PCs. Right: WA benchmark and Scenario 5 cases using MBH98 weights and temperature PCs (after fully reconciling calculations to WA benchmark with no weights and WA version of temperature PCs). Orange: MBH98 as archived; red: WA benchmark varied as described; magenta: using two covariance PCs; blue: one graph with 4 covariance PCs; one graph with 2 correlation PCs.

The differences between the Wahl and Ammann emulation and the MM05b emulation are obviously very slight: apples and apples. The slight difference pertains to how the reconstructed temperature PCs were re-scaled, a point I discussed on the blog in May 2005 after Wahl and Ammann released their code. The reconstructed temperature PCs were identical in the two codes; the difference arises in the MBH98 re-scaling procedure, which the two algorithms handle a little differently. In MM05b, we calculated the NH composite using the reconstructed temperature PCs from the regression module without re-scaling; the resulting composite was then re-scaled to match the instrumental NH composite. In the WA implementation (which has been adopted in all fresh calculations reported here), the reconstructed RPCs were re-scaled to match the variance of the target RPCs before the NH composite was calculated. The WA source code acknowledges a pers. comm. from Mann in April 2005 for this step, which is undocumented. The practical effect of re-scaling prior to calculating the NH composite (as opposed to the other order) in this particular case is to cause a slight compression of scale and to improve the replication of MBH results. However, the replication, as noted above, remains incomplete and there is a disquieting remaining discrepancy in 15th century results.
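For readers who want to see why the order of operations matters at all, here is a minimal Python sketch on synthetic data. It is not the MM05b code or the WA code: the two-PC setup, the weights, the series length and the function names are all hypothetical, and "re-scaling" is assumed here to mean matching standard deviations only. It simply shows that re-scaling each reconstructed PC before forming a composite need not give the same series as forming the composite first and re-scaling afterwards.

```python
import numpy as np

def rescale_to_variance(series, target):
    """Scale `series` so its standard deviation equals that of `target`."""
    return series * (np.std(target) / np.std(series))

def nh_composite(pcs, weights):
    """Collapse PC columns into one series using fixed (hypothetical) weights."""
    return pcs @ weights

# Synthetic stand-ins; none of these numbers come from MBH98, MM05b or WA.
rng = np.random.default_rng(0)
n_years, n_pcs = 79, 2
target_rpcs = rng.normal(size=(n_years, n_pcs)) * np.array([2.0, 1.0])
# "Reconstructed" PCs: damped copies of the targets plus noise.
recon_rpcs = (target_rpcs * np.array([0.5, 0.8])
              + rng.normal(scale=0.3, size=(n_years, n_pcs)))
weights = np.array([0.7, 0.3])
target_nh = nh_composite(target_rpcs, weights)

# Order 1: composite from unscaled PCs, then re-scale the composite.
nh_composite_first = rescale_to_variance(
    nh_composite(recon_rpcs, weights), target_nh)

# Order 2: re-scale each PC to its target's variance, then composite.
rescaled_rpcs = np.column_stack([
    rescale_to_variance(recon_rpcs[:, k], target_rpcs[:, k])
    for k in range(n_pcs)
])
nh_rescale_first = nh_composite(rescaled_rpcs, weights)

print("sd, composite then re-scale:", np.std(nh_composite_first))
print("sd, re-scale then composite:", np.std(nh_rescale_first))
print("sd, target composite:       ", np.std(target_nh))
```

In the first order the composite's variance matches the target composite by construction; in the second only the individual PCs are matched, so the scale of the composite can come out differently. How large that difference is in any real case depends on the data.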

The pink curve shows the emulation results using 2 covariance PCs in the NOAMER network, a situation illustrated in MM05b and discussed in Wahl and Ammann Scenario 5. The left panel shown here uses the identical digital information as the corresponding figure in MM05 (EE), but is colored and smoothed differently to match Wahl and Ammann. The blue curves shown on the right show the reconstruction using 2 correlation PCs or 5 covariance PCs. In MM05b, we reported the following result:

If the data are transformed as in MBH98, but the principal components are calculated on the covariance matrix, rather than directly on the de-centered data, the results move about halfway from MBH to MM. If the data are not transformed (MM), but the principal components are calculated on the correlation matrix rather than the covariance matrix, the results move part way from MM to MBH, with bristlecone pine data moving up from the PC4 to influence the PC2….

If a centered PC calculation on the North American network is carried out …., MBH-type results occur if the NOAMER network is expanded to 5 PCs in the AD1400 segment (as proposed in Mann et al., 2004b, 2004d). Specifically, MBH-type results occur as long as the PC4 is retained, while MM-type results occur in any combination which excludes the PC4.

The only amendment that I would make to the above comments is to concede a little less than conceded above: that inclusion of the covariance PC4 (the second paragraph of the quotation) merely moves the reconstruction about halfway from the results using 2 covariance PCs to the MBH results.
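As a reminder of why the covariance/correlation choice can move a pattern up or down the PC ranking, here is a toy Python sketch. Everything in it is an assumption made for illustration: the six-series "network", the sine-wave signal and the function names are hypothetical, and nothing here uses NOAMER data or reproduces the MM05b calculation. A signal shared by two low-variance series sits far down the ranking when PCs are taken on the covariance matrix, and moves to the top when each series is first standardized (correlation PCs).

```python
import numpy as np

def principal_components(data, use_correlation=False):
    """PC time series of column-centered data via SVD.

    With use_correlation=True each column is also divided by its
    standard deviation, which is equivalent to PCA on the correlation matrix.
    """
    x = data - data.mean(axis=0)
    if use_correlation:
        x = x / x.std(axis=0)
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    return u * s  # columns ordered by decreasing explained variance

def pc_tracking(pcs, signal):
    """1-based index of the PC most correlated (in absolute value) with signal."""
    corrs = [abs(np.corrcoef(pcs[:, j], signal)[0, 1])
             for j in range(pcs.shape[1])]
    return int(np.argmax(corrs)) + 1

# Synthetic toy network (hypothetical): two low-variance series sharing a
# signal, plus four high-variance series of independent noise.
rng = np.random.default_rng(1)
n_years = 200
signal = np.sin(np.linspace(0.0, 6.0 * np.pi, n_years))
low_var = 0.1 * (signal[:, None] + 0.1 * rng.normal(size=(n_years, 2)))
high_var = rng.normal(size=(n_years, 4))
network = np.column_stack([low_var, high_var])

rank_cov = pc_tracking(principal_components(network), signal)
rank_corr = pc_tracking(
    principal_components(network, use_correlation=True), signal)

print("covariance PCs: signal sits in PC", rank_cov)
print("correlation PCs: signal sits in PC", rank_corr)
```

The point of the toy is only that the number of PCs one has to retain to capture a given pattern depends on this choice, which is why "2 PCs" under one convention and "2 PCs" under the other are not the same calculation.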

When you think of the various calumnies thrown out by Wahl and Ammann (and re-cycled by Juckes), the similarities between the results in MM05b and Wahl and Ammann Scenario 5 are remarkable. The failure of Ammann and Wahl to reconcile these results amounts in my opinion to a distortion of the research record, a distortion being perpetuated by Juckes et al.

New Mann Paper

Michael E. Mann, 2006, Climate Over the Past Two Millennia, Annu. Rev. Earth Planet. Sci. 2007. 35:111–36 is online here. No sign so far of Mann, M.E. et al, Robustness of proxy-based climate field reconstruction methods, 2006 (accepted), which was cited by “Anonymous Referee #2” in the Burger-Cubasch review.

Funding generously provided by NSF here.