One more attempt to extract data. This is the 39th email in my correspondence with Science and I still don’t have a complete record on either Esper et al 2002 or Osborn and Briffa 2006. And people wonder why I haven’t published more. Continues from here

Dear Dr Hanson,

Thank you for your continued efforts in trying to obtain data from Esper et al 2002 and Osborn and Briffa 2006. I regret that their unresponsiveness is making you handle this file many more times than should have been necessary. I acknowledge that a little more information came in from Osborn in April, but the majority of the requests that we discussed remain unresolved.

Esper et al 2002

1. Esper sent 13 of 14 chronologies. The 14th chronology (Mongolia) is still missing and no progress has been made on this in 2 months. This should be trivial to supply and I request that you take steps to obtain the missing chronology.

2. To date, Esper has sent 11 of 14 measurement files (10 in March and most recently Polar Urals in April), omitting Mongolia and two Graumlich sites, Boreal and Upperwright. I realize that there is a Mongolia file at WDCP (mong003.rwl), but the dates do not match Esper’s dates. Also, since Esper does not necessarily use all the data in a file, it is necessary to see the data as used. The two Graumlich sites are definitely not at WDCP and it is my understanding that Graumlich has lost the data. I presume that Esper was using his own version obtained prior to Graumlich losing the data, but Esper’s seeming inability to provide the measurement data for these two sites is unsettling. Perhaps it’s simply that he hasn’t considered your requests important enough to respond to. If that is the case, perhaps you could write to him in firmer tones than you have done so far.

I can see no valid reason why Esper cannot respond to items (1) and (2) within 48 hours or why you should not put him on such notice.

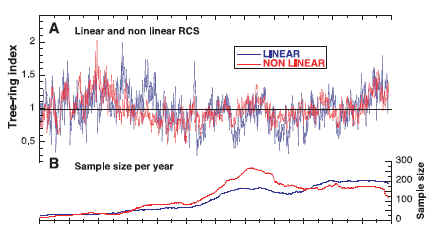

3. I requested information on Esper’s methodology by which he (a) distinguished between linear and nonlinear sites; and (b) decided on which data to remove from a site data set. You forwarded Esper’s answer in your most recent email, but his answer remains unresponsive and, indeed, even somewhat unsettling.

In the April 2006 email forwarded by you, Esper cited Esper et al 2003 http://www.wsl.ch/staff/jan.esper/publications/TRR_2003.pdf as authority both for the supposed distinction between linear and nonlinear sites and the removal of data. Unfortunately, that article contains no discussion whatever on the distinction; indeed it does not even mention “linear” and “nonlinear” sites. The article does refer to “meta information”, but not in a way that is germane to replicating the calculation. Accordingly, I re-iterate my previous request for an operational definition of “linear” and “nonlinear” sites as used to produce the results in Esper et al 2002. Source code would be fine.

Secondly, Esper said that the purpose of removing data was to avoid a “biased chronology”, again citing Esper et al 2003 as authority. In this case, the comments in the “authority” were, if anything, more unsettling than the email. Esper et al 2003 said on the subject of removing data:

Before venturing into the subject of sample depth and chronology quality, we state from the beginning, “more is always better”. However as we mentioned earlier on the subject of biological growth populations, this does not mean that one could not improve a chronology by reducing the number of series used if the purpose of removing samples is to enhance a desired signal. The ability to pick and choose which samples to use is an advantage unique to dendroclimatology.

To the extent that this is an accurate description of the methods employed in Esper et al 2002, it does not justify removing data on the grounds of avoiding bias. Indeed, it seems to be a recipe for bias in the direction of the “desired signal” – making one wonder what was the “desired signal” and, on what grounds, a signal should be “desired”.

In responding to the issue of removal of data, Esper introduced his answer with the phrase “as described”, implying that there was some prior description of the removal of data. There is no mention of the removal of data in Esper et al 2002 and the issue came up only after I was able to inspect source data that you obtained. Again I note that Esper’s answer was unresponsive and I reiterate my request for a replicable methodology by which Esper’s removal of data can be replicated.

Osborn and Briffa

I acknowledge and appreciate the gridcell data which you obtained from Osborn and Briffa. I am unimpressed by their explanation and feel that it deserves wider attention as it may shed useful light on the quality of the HadCRU2 data set. I recognize that you requested that their answer remain confidential. I have requested a confidentiality release from Osborn and Briffa, but to no avail. I can see no valid reason for confidentiality. I request that you re-consider the basis for confidentiality and provide an answer that is not confidential.

I requested 4 measurement data sets (Polar Urals, Tornetrask, Taimyr and Athabaska). Osborn and Briffa appear to have refused this data and you referred me to the “original authors” or the original journals. In the case of three of these data sets (Polar Urals, Tornetrask, Taimyr), the “original author” was Briffa (2000). You have presented me with a distinction without a difference. While I have contacted the “original author” (Briffa) for this information, there is little prospect that he will provide the information except under a direction from Science. I see no reason why Science should countenance an author hiding behind a prior publication in a lesser journal with weaker archiving policies. I request that Science take a broad view of its data archiving policies in this case and that Science does not permit authors to take legalistic approaches to avoid supplying data. I ask that you require authors, who have used results from a “non arms length” publication which has not been properly archived, to meet Science’s policies for all non-arms length data. I therefore request that you require Osborn and Briffa to produce measurement data from Briffa (2000), used to produce results applied in Osborn and Briffa 2006.

Thompson

I note that no progress seems to have been made with the Thompson data and hope that you are continuing your efforts on this front as well.

Once again, I appreciate your efforts. It is too bad the various authors have responded with bits and pieces of their data, rather than with complete information.

Regards, Steve McIntyre

Update: On May 19, Hanson reverted with data from Lisa Graumlich for two series. As to my requests for the measurement data used to create the Briffa (2000) chronologies used in Osborn and Briffa 2006, he stated:

If you require data from papers published elsewhere, you should contact those authors and journals.

That would be, uh, Briffa. He also stated that I was not to reproduce emails from him about data provenance even though they were coming from him in his official capacity.

On May 19, I sent the following letter:

Dear Dr Hanson,

Thank you for finally supplying Esper’s version of the two foxtail series. I note that these have also been archived at WDCP in the past week, although the versions archived there differ somewhat from Esper’s versions. Oddly enough, in each case, Esper’s version includes a series that is not included in what Graumlich archived and in the case of the Upper Wright series, Esper did not use all the series.

Osborn and Briffa

I regret the position that you’ve taken here – that Science has no obligation to require them to archive their measurement data. Below is a graphic illustrating the difference in the “chronology” archived by Graumlich this week for the two foxtail sites, as compared with the “chronology” sent in by Esper. The methodologies for calculating the “chronology” obviously lead to quite different results from the same or virtually the same data. Thus, in the tree ring field, where quite different “chronologies” can be obtained from the same measurement data, one needs to be able to evaluate the measurement data in order to test for robustness of the conclusions. This is presently impossible for the Osborn and Briffa paper, which you published. While I will maintain confidentiality on the wording of your response and appreciate your efforts, I don’t consider that any confidentiality attaches to the lack of success in my attempts to obtain this data through Science despite your considerable efforts. Here considerable blame remains with the original authors.

Mongolia

The Tarvagatny Pass version archived at WDCP is obviously similar to Esper’s version, but it is not the same. In particular, the illustration in the SI to Esper et al 2002

shows that Esper used Mongolia values back to 800, while the WDCP archive includes values only after 900. Since there are relatively few values in the 800-900 period, the Mongolia values actually used by Esper remain relevant. Once again I re-iterate my request for the Mongolia version used by Esper as well as the site chronology generated by Esper – both of which have been referred to in virtually every communication.

Esper Methodology

I take it from your last communication that you do not intend to obtain or require any clarification or further details from Esper regarding his methodology – even on the matter of how he decided which series to include or exclude. Is this correct or is this in process?

Thompson

Again, I have not heard from you regarding Thompson. Is this simply a dead topic? Should I conclude that Science will be making no efforts to require Thompson to archive data beyond what is presently available?

Thank you as usual for your consideration.

Regards, Steve McIntyre

No response was ever received to this letter.

Request re Briffa 2000

Since Science said to contact the “other authors” in respect to measurement data, on April 28, 2006, I wrote to Tim Osborn as follows:

Science said that you did not directly use the measurement data for Polar Urals, Tornetrask, Yamal and Taimyr, but chronologies previously published and therefore took no responsibility for obtaining this data, directing me back to you or to the original journal. While I disagree with this decision and may pursue it with Science if necessary, to simplify matters would you voluntarily provide the measurement data used for the above sites in calculating the chronology in Briffa [2000]. Thanks, Steve McIntyre

On May 23, Osborn responded:

Steve – Science are correct to say that I “did not directly use the measurement data for” those sites. Not only did I not use them, I don’t actually have a copy of them. So I cannot help you. Tim

OK, he didn’t have the data, but that didn’t mean that Briffa didn’t have the data. So on May 23, I wrote to Briffa one more time:

Dear Dr Briffa,

On April 28, 2006, I asked Tim Osborn for the measurement data for Polar Urals, Tornetrask, Yamal and Taimyr sites, supporting the chronologies used in Osborn and Briffa [2006]. Osborn says that he does not have the data, but did not say that you didn’t have the data. Do you have the data? If so would you please comply with the request below and voluntarily provide the measurement data used in Briffa 2000, and relied upon in Osborn and Briffa 2006, for these sites.

Thank you for your attention. Steve McIntyre

On May 28, 2006, Briffa replied:

Steve these data were produced by Swedish and Russian colleagues – will pass on your message to them

cheers, Keith

That was the last I heard from him. By this time, I’d exchanged over 40 emails with Science and others and figured I’d done all that I could do.